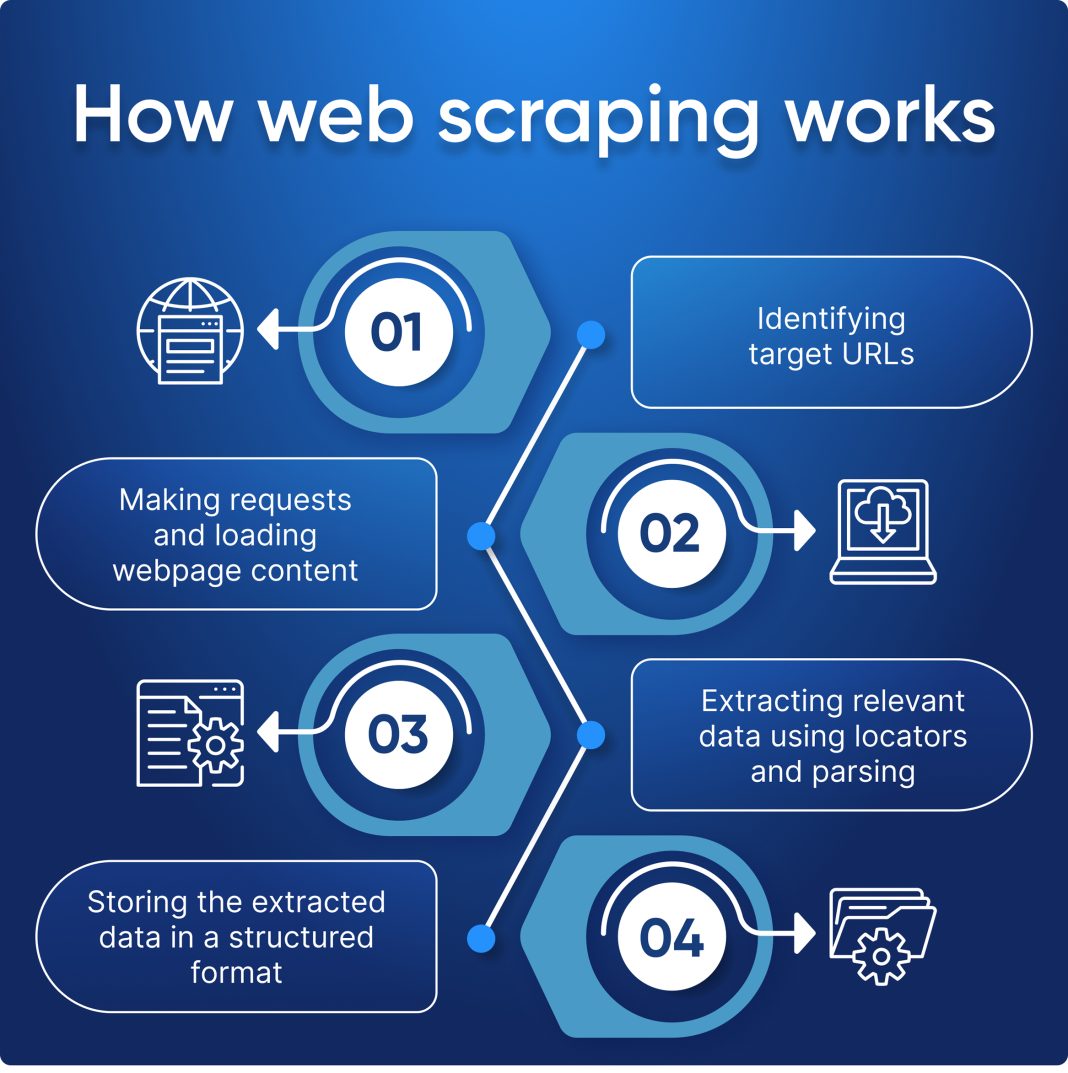

Web scraping is a technique for extracting data from websites, used across industries for competitive analysis, research, and data-driven insight. With effective scraping techniques, businesses can collect large amounts of public information to support their decision-making. One popular approach is web scraping with Python, which relies on libraries like Beautiful Soup and Scrapy for efficient data extraction. It is crucial, however, to scrape ethically: comply with each site’s terms of service and avoid legal repercussions. Whether you’re building data extraction tools or analyzing competitors, understanding the nuances of this practice is essential.

Gathering data from online sources through automated methods is also referred to as data harvesting or web data extraction. It lets users pull insights from across the internet, which is particularly useful for market analysis and trend forecasting. Techniques range from simple data collection methods to complex automated systems written in programming languages like Python. Responsible, ethical data sourcing is paramount, both to comply with site policies and to maintain the integrity of the information gathered. As more businesses recognize the significance of online data, skills such as scraping for competitor analysis become essential for strategic planning.

Understanding Web Scraping Techniques

Web scraping techniques encompass a variety of methods used to extract data from websites, and understanding these techniques is vital for anyone interested in data extraction. The simplest method involves utilizing a browser extension or simple scripts that fetch the HTML content of web pages. More advanced techniques involve writing custom scripts using programming languages like Python, which allows for automated data collection processes. Popular libraries such as Beautiful Soup and Scrapy enable developers to navigate through HTML hierarchies and pull out specific data points, making Python a go-to choice for many web scraping enthusiasts.

Additionally, web scraping techniques can be categorized into two main types: front-end scraping and back-end scraping. Front-end scraping involves accessing the rendered HTML content directly from the user interface, which is crucial for websites utilizing AJAX for dynamic content loading. On the other hand, back-end scraping involves interacting with the server’s APIs, which often provide structured data more efficiently. Understanding these distinctions equips web scrapers to choose the appropriate method based on their project needs.
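To illustrate the back-end approach, here is a minimal sketch that builds a paginated API URL and reads the structured JSON it returns. The endpoint `https://example.com/api/products`, the `page`/`per_page` parameters, and the `items`/`next_page` response fields are all hypothetical; a real API will define its own shape and may require authentication.

```python
import json
from urllib.parse import urlencode

# Hypothetical endpoint; substitute the documented API of the site in question.
API_BASE = "https://example.com/api/products"


def build_page_url(page, per_page=2):
    """Build the URL for one page of results."""
    return f"{API_BASE}?{urlencode({'page': page, 'per_page': per_page})}"


def parse_products(body):
    """Return (products, has_more) from a JSON response body."""
    payload = json.loads(body)
    products = [(item["name"], item["price"]) for item in payload["items"]]
    return products, payload.get("next_page") is not None


# A sample response in the assumed shape, standing in for a real HTTP reply.
SAMPLE_BODY = (
    '{"items": [{"name": "Widget", "price": 19.99}, '
    '{"name": "Gadget", "price": 5.0}], "next_page": 2}'
)

products, has_more = parse_products(SAMPLE_BODY)
print(build_page_url(1), products, has_more)
```

Because the fields arrive already named and typed, there is no markup to navigate, which is why this route tends to break less often than parsing rendered HTML.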

The Importance of Ethical Web Scraping

Ethical web scraping is an essential consideration for anyone looking to extract data from the internet. Adhering to principles of ethical scraping means respecting the website’s terms of service and its robots.txt file, which tells automated clients which paths the site owner does not want crawled. Note that robots.txt is a voluntary convention rather than a legal document; the terms of service and applicable law determine what may actually be collected and reused. Responsible web scraping ensures that websites can function without harm or interruption, preserving the integrity of their services. Scrapers should avoid requesting data excessively or in ways that could destabilize the website, such as overloading servers with rapid requests.

Moreover, ethical web scraping extends beyond compliance with legal standards; it also relates to how the extracted data is used. For instance, using scraped data for malicious purposes, like spamming or identity theft, is not only unethical but potentially illegal. Therefore, web scrapers should aim to use data for legitimate purposes, such as market research, competitor analysis, or improving user experience. By prioritizing ethical practices in web scraping, users contribute to a healthier ecosystem where data sharing benefits all parties involved.
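The robots.txt check described in this section can be sketched with Python’s standard `urllib.robotparser` module. The robots.txt content, the `example-scraper` user agent, and the example.com URLs below are made up for illustration; a real scraper would load the file from the target site, for instance with `set_url()` and `read()`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the example runs offline.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-scraper"  # hypothetical user agent name


def build_policy(robots_txt):
    """Parse robots.txt text into a queryable policy object."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


policy = build_policy(ROBOTS_TXT)

for url in ("https://example.com/products", "https://example.com/private/report"):
    verdict = "allowed" if policy.can_fetch(USER_AGENT, url) else "disallowed"
    print(url, verdict)

# Crawl-delay is a non-standard directive that some sites publish.
print("crawl delay:", policy.crawl_delay(USER_AGENT))
```

Running the check before every request, rather than once at startup, keeps the scraper honest if the list of target URLs grows over time.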

Leveraging Python for Effective Web Scraping

Python has become a leading language for web scraping due to its simplicity and the vast array of libraries available. Libraries like Beautiful Soup and Scrapy provide robust tools that simplify the process of navigating web pages and extracting relevant data elements. With Beautiful Soup, users can easily parse HTML and XML documents, allowing for quick identification of necessary data points through its intuitive API. On the other hand, Scrapy offers a comprehensive framework for larger web scraping projects, enabling automatic data extraction and storage.

Using Python for web scraping also integrates well with data analysis. Once data is extracted, it can be processed, analyzed, and visualized using additional Python libraries like Pandas or Matplotlib. This allows for a seamless workflow, where users can source data, analyze trends, and make informed decisions based on collected insights. As Python continues to dominate the web scraping landscape, its applications stretch from academic research to commercial data strategies, making it an invaluable tool for analysts and developers alike.

Exploring Data Extraction Tools for Scraping

Data extraction tools play a crucial role in the web scraping process by providing the necessary features to automate data collection efficiently. There are various tools available, each catering to different scraping needs, such as Octoparse, ParseHub, and Import.io. These tools often come with user-friendly interfaces that minimize the need for coding, allowing users without programming expertise to extract data easily.

Many data extraction tools also support API connections, which can enhance the scraping process. By integrating with APIs, users can access structured data directly instead of relying on parsing HTML, which can change frequently and lead to broken scrapers. This feature is particularly beneficial for businesses looking to gain insights from competitor analysis or market trends without the hassle of maintaining complex scripts. Overall, choosing the right data extraction tool depends on the specific requirements of the web scraping project, including the scale, complexity, and data types being targeted.

Conducting Competitor Analysis Through Web Scraping

Web scraping for competitor analysis is a valuable strategy for businesses looking to stay ahead in their markets. By scraping data from competitors’ websites, businesses can gather insights into pricing, product offerings, customer reviews, and overall market positioning. This information can highlight opportunities and weaknesses, allowing companies to make data-driven decisions and optimize their strategies. For example, by analyzing competitors’ pricing through scraped data, a business can adjust its own prices to remain competitive.

Moreover, web scraping can significantly reduce the time and effort involved in gathering competitive intelligence. Traditional methods of collecting this data often require manual research, which is not only time-consuming but can also result in outdated information. With web scraping, businesses can set up automated processes to acquire real-time data that keeps them informed of market changes. This continuous flow of information supports proactive decision-making, helping companies adapt quickly to shifts in consumer behavior or market dynamics.
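As a small worked example of the pricing comparison mentioned in this section, the sketch below positions one product’s price against the median of scraped competitor prices. The function name `price_position` and the sample prices are illustrative assumptions, not figures from any real market.

```python
from statistics import median


def price_position(own_price, competitor_prices):
    """Compare our price with the median competitor price."""
    mid = median(competitor_prices)
    return {
        "median": mid,
        # Positive means we are priced above the median, in percent.
        "gap_pct": round((own_price - mid) / mid * 100, 1),
        # How many competitors undercut us.
        "cheaper_competitors": sum(p < own_price for p in competitor_prices),
    }


# Hypothetical scraped prices for the same product at three competitors.
print(price_position(22.0, [18.0, 20.0, 25.0]))
```

The median is used instead of the mean so that a single outlier listing does not distort the comparison.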

Best Practices for Responsible Web Scraping

Implementing best practices for responsible web scraping is vital for ensuring compliance with legal and ethical standards while maximizing the effectiveness of data collection efforts. One fundamental practice includes respecting the website’s robots.txt file, which indicates the sections of the site that are off-limits for scraping. Additionally, adhering to the appropriate request rate is crucial; sending too many requests in a short period can lead to IP bans and disrupt website functionality.
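One simple way to keep to a request rate is to enforce a minimum interval between consecutive requests. The sketch below is one possible approach using only the standard library; the `Throttle` class, the `seconds_to_wait` helper, and the two-second interval are illustrative choices, and a site’s own published limits should take precedence.

```python
import time


def seconds_to_wait(last_request_at, now, min_interval):
    """Return how long to pause so requests stay min_interval seconds apart."""
    if last_request_at is None:
        return 0.0  # first request: nothing to wait for
    return max(0.0, min_interval - (now - last_request_at))


class Throttle:
    """Blocks just long enough to keep a minimum gap between requests."""

    def __init__(self, min_interval):
        self.min_interval = min_interval
        self._last = None

    def wait(self):
        delay = seconds_to_wait(self._last, time.monotonic(), self.min_interval)
        if delay > 0:
            time.sleep(delay)
        self._last = time.monotonic()


# Usage: call throttle.wait() immediately before each request.
throttle = Throttle(min_interval=2.0)
throttle.wait()  # returns at once, since no earlier request was recorded
```

`time.monotonic()` is used rather than wall-clock time so that system clock adjustments cannot shorten the gap.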

Another best practice involves cleaning and processing the data post-extraction to ensure that it is accurate and usable. This might include removing duplicates, filling in missing values, and standardizing formats. Furthermore, users should document their scraping processes to maintain transparency and accountability, which is especially important when data is shared or published. Overall, a strategic approach to web scraping not only enhances the integrity of the collected data but also fosters a respectful relationship between scrapers and website owners.
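The cleaning steps listed above (removing duplicates, filling in missing values, standardizing formats) can be sketched in plain Python. The record fields `name`, `price`, and `currency`, and the default of `"USD"`, are assumptions made for this example; Pandas offers equivalent operations for larger datasets.

```python
def clean_records(records, default_currency="USD"):
    """Standardize formats, fill missing currency, and drop duplicates."""
    seen = set()
    cleaned = []
    for rec in records:
        # Standardize: collapse whitespace and use title case for names.
        name = " ".join(rec["name"].split()).title()
        # Standardize: strip currency symbols and thousands separators.
        price = float(str(rec["price"]).replace("$", "").replace(",", ""))
        # Fill missing values with an explicit default.
        currency = rec.get("currency") or default_currency
        key = (name, price, currency)
        if key in seen:
            continue  # duplicate once normalized
        seen.add(key)
        cleaned.append({"name": name, "price": price, "currency": currency})
    return cleaned


# Hypothetical raw scrape output with inconsistent formatting.
RAW = [
    {"name": "  blue   widget ", "price": "$1,299.00", "currency": "USD"},
    {"name": "Blue Widget", "price": 1299, "currency": None},
    {"name": "red widget", "price": "19.5"},
]

print(clean_records(RAW))
```

Normalizing before deduplicating matters here: the first two records only turn out to be the same item once their names and prices share a format.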

The Role of HTML Parsing in Web Scraping

HTML parsing is a fundamental aspect of the web scraping process, as it involves breaking down the HTML structure of web pages to extract specific data. Effective HTML parsing allows developers to isolate and retrieve vital information such as product details, prices, and user reviews. Libraries like Beautiful Soup in Python excel at parsing HTML and offer versatile functions that can navigate tags, attributes, and structures easily. Understanding how to parse HTML properly is essential for anyone looking to develop efficient web scrapers that consistently deliver quality data.

Moreover, mastering the intricacies of HTML parsing equips developers with the skills necessary to handle diverse web layouts. Since websites may employ various HTML designs and formatting techniques, an adept web scraper must adjust their parsing strategies accordingly. This adaptability ensures the scraper can accurately extract data from both simple and complex website structures. Consequently, a strong grasp of HTML parsing is not only beneficial for competitive intelligence but also for enhancing the overall effectiveness of data-driven projects.
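To make the parsing step concrete, here is a minimal sketch that pulls product names and prices out of a small HTML fragment. It uses the standard library’s `html.parser` rather than Beautiful Soup so that it runs without extra installs; with Beautiful Soup the same extraction would typically be shorter. The markup, class names, and `ProductParser` class are invented for the example.

```python
from html.parser import HTMLParser


class ProductParser(HTMLParser):
    """Collects name/price text from <li class="product"> items."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._field = None  # which field the current <span> holds, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self.products.append({})
        elif tag == "span" and self.products:
            self._field = next((c for c in classes if c in ("name", "price")), None)

    def handle_data(self, data):
        if self._field:
            current = self.products[-1]
            current[self._field] = current.get(self._field, "") + data

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def parse_products(html):
    """Return a list of (name, price) tuples found in the HTML."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return [
        (p["name"].strip(), float(p["price"].strip().lstrip("$")))
        for p in parser.products
    ]


# Hypothetical page fragment; note the extra class and the HTML entity.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span> <span class="price">$19.99</span></li>
  <li class="product featured"><span class="name">Gadget &amp; Co</span> <span class="price">$5.00</span></li>
</ul>
"""

print(parse_products(SAMPLE_HTML))
```

Matching on a class within the class list, rather than on the exact attribute string, is one small way to stay robust when a site adds styling classes such as `featured`.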

Challenges of Web Scraping and How to Overcome Them

Web scraping presents various challenges that can hinder data extraction. One of the most common is dealing with websites that use anti-scraping measures such as CAPTCHAs and IP blocking, or that load their content through JavaScript, which a plain HTTP request will not execute. The former aim to protect the site’s data and keep automated bots out; the latter is often just a side effect of how the site is built. Typical workarounds include using a headless browser to render JavaScript-driven pages and rotating IP addresses to spread requests. Techniques that actively circumvent CAPTCHAs or blocks, including machine-learning-based solvers, may breach a site’s terms of service, so they should be weighed against the ethical and legal considerations covered elsewhere in this article.

Another significant challenge is the potential legal issues surrounding web scraping activities. As data privacy laws continue to evolve, scraping certain types of data may lead to compliance risks. For instance, extracting personal information from websites without consent can result in serious legal repercussions. To mitigate these risks, scrapers should conduct thorough research on the relevant laws and regulations in their areas and ensure they acquire data ethically. Additionally, adhering to a website’s terms of service provides guidance on acceptable scraping practices, fostering a more responsible approach to data extraction.

Future Trends in Web Scraping Technology

The future of web scraping technology is poised for evolution, with advancements in artificial intelligence and machine learning playing a significant role. These technologies have the potential to make data extraction more efficient by automating more complex tasks, such as locating the relevant data on JavaScript-heavy pages whose layout changes frequently. As AI algorithms improve, they can learn to navigate various web structures intelligently, streamlining the scraping process and reducing the need for manual adjustments.

Furthermore, the rising focus on ethical data practices and compliance with privacy regulations is shaping the development of web scraping tools. Developers are now incorporating features that allow users to ensure compliance with legal standards, such as built-in checks for robots.txt files and user consent mechanisms. This evolution reflects the growing acknowledgment of the importance of ethical web scraping in building trust and maintaining positive relationships within the digital ecosystem.

Frequently Asked Questions

What are the most popular web scraping techniques?

Popular approaches in Python include parsing HTML with Beautiful Soup, crawling at scale with Scrapy, and driving a real browser with Selenium for pages that depend on JavaScript. All three automate the collection of information, making it easier to gather data for analysis or reporting.

What is ethical web scraping and why is it important?

Ethical web scraping involves following a website’s terms of service, respecting the robots.txt file, and avoiding excessive requests that could overwhelm a server. This practice is crucial because it keeps the target site running normally and helps ensure compliance with legal guidelines, protecting both the scraper and the website owner.

How can I get started with Python web scraping?

To start with Python web scraping, you should install libraries like Beautiful Soup or Scrapy. Familiarize yourself with making HTTP requests using libraries like Requests, then learn how to parse HTML and extract data efficiently. Numerous tutorials and documentation are available online to help you through the process.
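As a first step, the sketch below downloads a page and decodes it to text. It uses the standard library’s `urllib.request` as a stand-in for the Requests library mentioned above, so it runs without installing anything; the `fetch_html` function and the `USER_AGENT` string are hypothetical names chosen for this example.

```python
from urllib.request import Request, urlopen

# Hypothetical identifier; a descriptive User-Agent lets site owners contact you.
USER_AGENT = "example-scraper/0.1 (contact@example.com)"


def fetch_html(url, timeout=10):
    """Download a page and decode it using the charset the server declares."""
    request = Request(url, headers={"User-Agent": USER_AGENT})
    with urlopen(request, timeout=timeout) as response:
        # Fall back to UTF-8 when the response does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Usage (performs a real network request, so it is left commented out):
# html = fetch_html("https://example.com/")
```

The returned string can then be handed to an HTML parser such as Beautiful Soup to extract the data you need.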

What are some reliable data extraction tools for web scraping?

Reliable data extraction tools for web scraping include ParseHub, Octoparse, and Import.io. These tools provide user-friendly interfaces, enabling users to scrape data without extensive coding knowledge. Additionally, they often offer cloud-based services for easier management of large datasets.

How can web scraping be used for competitor analysis?

Web scraping can be instrumental in competitor analysis by allowing businesses to collect data on competitor pricing, product offerings, and market trends. By scraping competitors’ websites, companies can gather valuable insights, track changes in the market, and adjust their strategies accordingly.

| Key Point | Details |
|---|---|
| Definition of Web Scraping | The process of extracting data from websites. |
| Languages Used | Python, JavaScript, Ruby, PHP. |
| Python Libraries | Beautiful Soup, Scrapy. |
| Applications | Data analysis, research, competitor monitoring. |
| Ethical Considerations | Adhere to website terms of service and robots.txt. |
| Respect Data Policies | Essential for ethical scraping practices. |

Summary

Web scraping is a valuable tool for gathering data from online sources efficiently. It allows businesses and researchers to automate data collection, which supports data analysis, research, and competitive intelligence. To maximize the benefits of web scraping, it is crucial to use appropriate programming languages and libraries, such as Python’s Beautiful Soup and Scrapy. However, one must navigate the ethical landscape by respecting the rules set by websites and adhering to their terms of service. This responsible approach not only fosters good practices in web scraping but also enhances the credibility and integrity of the data collected.