Web scraping is a technique for programmatically extracting data from websites. Python libraries such as Beautiful Soup and Scrapy streamline the work: a scraper sends requests to a web server to fetch HTML content, then parses that content to find specific data points. As more decisions come to depend on data, these extraction techniques have become useful across many industries. When using these tools, respect each website’s robots.txt file and terms of service to reduce the risk of legal problems.

Web data extraction, sometimes called data harvesting, means gathering information from online resources through automated means. With the rise of analytics and data-driven decision-making, Python scripts and libraries such as Beautiful Soup and Scrapy have emerged to support this process. Learning these methods can help you obtain up-to-date data efficiently, and the many tutorials available for Scrapy are a practical way to build that skill. Following ethical guidelines when scraping helps keep a data gathering operation running smoothly.

Understanding Web Scraping and Its Applications

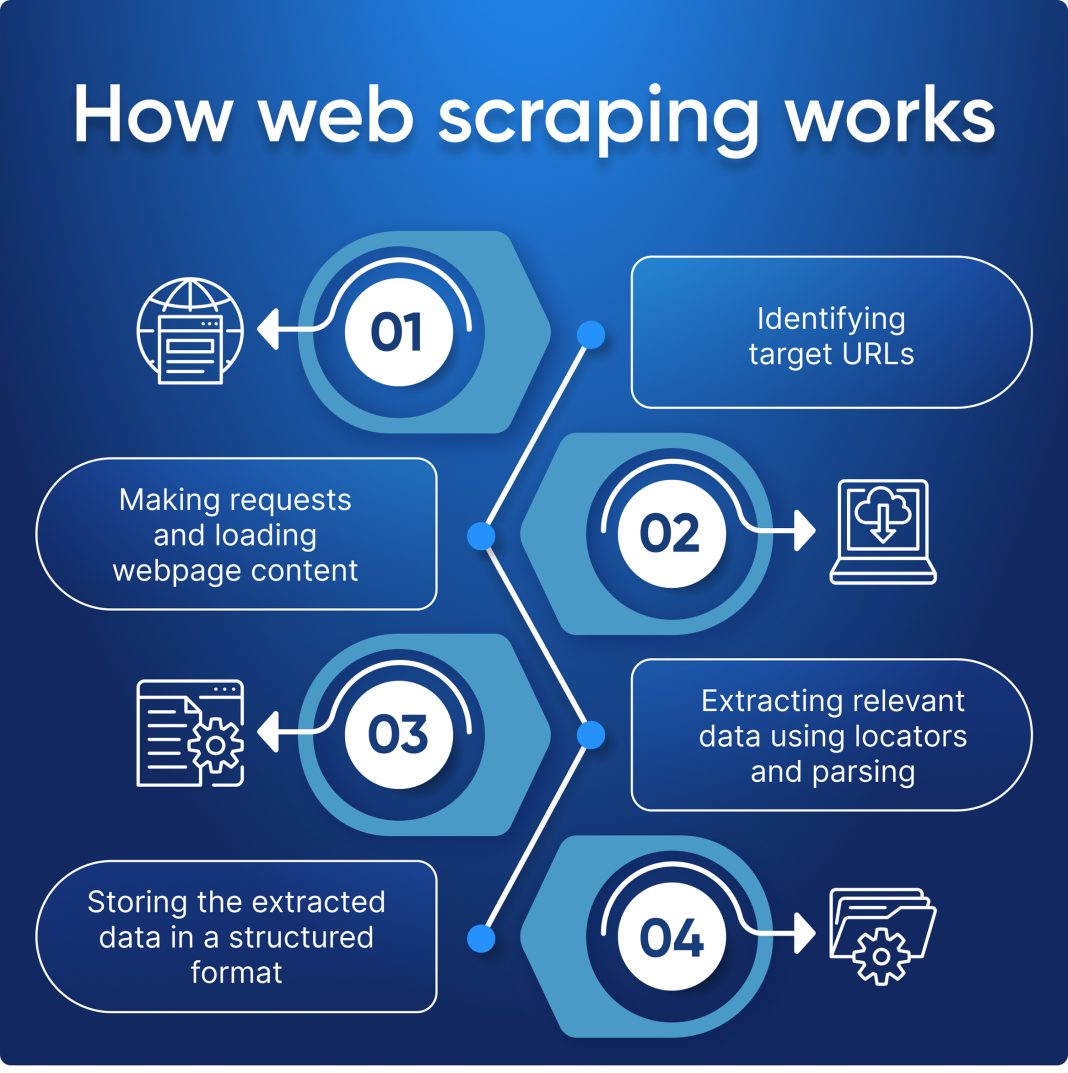

Web scraping is the process of programmatically extracting data from websites. As the digital landscape continues to expand, businesses and developers are leveraging web scraping to gather insights, track market trends, and monitor competitors. This data extraction technique allows users to convert unstructured information found on the web into structured formats, facilitating analysis and decision-making.

From e-commerce sites to news portals, the applications of web scraping are vast. Organizations utilize collected data to enhance customer experience, optimize pricing strategies, and personalize marketing efforts. Moreover, industries such as finance and research rely heavily on web scraping for analysis and forecasting, showcasing the crucial role this technique plays in today’s data-driven environment.

Essential Web Scraping Tools and Libraries

When it comes to web scraping, choosing the right tools is critical for operational efficiency and success. Python stands out as one of the most popular languages for web scraping due to its simplicity and powerful libraries. Two noteworthy libraries in the Python ecosystem are Beautiful Soup and Scrapy. Beautiful Soup is ideal for beginners, offering an intuitive way to parse HTML and XML documents, allowing users to easily navigate through the data.

On the other hand, Scrapy is a more sophisticated framework designed for large-scale web scraping projects. It provides built-in support for handling requests, managing data pipelines, and exporting output in various formats. Both Beautiful Soup and Scrapy have comprehensive documentation and tutorials to help users get the most out of them, which makes them good starting points for anyone looking to master web scraping.

Data Extraction Techniques in Web Scraping

Data extraction techniques are the backbone of effective web scraping. To begin, one must understand how to send requests to web servers and retrieve HTML content efficiently. This often involves a library such as Requests in Python, which lets developers issue HTTP requests and read the responses with very little code. Once the HTML content is fetched, the next step is parsing it.

Parsing is where tools like Beautiful Soup become invaluable. They allow programmers to search through the HTML structure seamlessly and retrieve specific data points or elements desired from a webpage. Efficient data extraction techniques are crucial for successful scraping, particularly when navigating complex websites with dynamic content or frequently changing structures.
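The fetch-then-parse flow described above can be sketched as follows. This is a minimal illustration, not a complete scraper: it assumes the `requests` and `beautifulsoup4` packages are installed, and the `headline` class name, the `fetch_html` and `extract_headlines` helpers, and the sample markup are all hypothetical.

```python
import requests
from bs4 import BeautifulSoup


def fetch_html(url, timeout=10.0):
    """Download a page and return its HTML text, raising on HTTP errors."""
    response = requests.get(url, timeout=timeout)
    response.raise_for_status()
    return response.text


def extract_headlines(html):
    """Return the stripped text of every <h2 class="headline"> element."""
    # "html.parser" is the parser bundled with Python, so no extra install is needed.
    soup = BeautifulSoup(html, "html.parser")
    return [tag.get_text(strip=True) for tag in soup.find_all("h2", class_="headline")]


# Hypothetical sample markup, used here so the parsing step can run offline.
SAMPLE_HTML = """
<html><body>
  <h2 class="headline"> First story </h2>
  <h2 class="headline">Second story</h2>
  <h2 class="other">Not a headline</h2>
</body></html>
"""
```

Calling `extract_headlines(SAMPLE_HTML)` returns `['First story', 'Second story']`. For a live page, the same parser would be fed the result of `fetch_html(url)`.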

Legal Considerations When Scraping Websites

While web scraping can provide valuable data, it’s essential to navigate the legal landscape surrounding this practice carefully. Before scraping any website, review the site’s robots.txt file, which tells automated clients which areas of the site the owner wants them to stay out of. Complying with the website’s terms of service is equally important to avoid potential legal repercussions.

Failure to adhere to these guidelines could result in a ban from that website or, in severe cases, legal action. Therefore, it’s advisable for web scrapers to operate within ethical boundaries and develop a good understanding of the legal intricacies involved in the data extraction process. A compliance-first approach can help maintain a good relationship with website owners while still acquiring the needed data.
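One way to automate the robots.txt check is Python's standard-library `urllib.robotparser`. The sketch below parses a hypothetical robots.txt body from a string so it runs offline; the `example-scraper` user agent name, the rules, and the URLs are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real one would be downloaded from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_parser(robots_txt):
    """Create a RobotFileParser from the text of a robots.txt file."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# True if the rules allow this user agent to fetch the URL, False otherwise.
allowed_private = parser.can_fetch("example-scraper", "https://example.com/private/data")
allowed_products = parser.can_fetch("example-scraper", "https://example.com/products")

# The Crawl-delay value that applies to this user agent, or None if none is set.
delay = parser.crawl_delay("example-scraper")
```

Against a live site, the parser would instead be pointed at the real file with `parser.set_url(...)` followed by `parser.read()`. Note that this only checks robots.txt; terms of service still have to be read by a person.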

Common Challenges in Web Scraping

Web scraping comes with its share of challenges that can hinder the data extraction process. One common issue is handling CAPTCHAs, which are implemented by websites to prevent automated scraping. These security measures can block scraping scripts, making it imperative for developers to find creative workarounds, such as using services that can solve CAPTCHAs automatically.

Additionally, changing website structures can disrupt scraping efforts. Websites often update their HTML layouts, which can result in scraping scripts breaking. To combat this, it’s vital to build flexible and adaptive scraping solutions that can adjust to minor changes over time. Regular maintenance and updates to scraping scripts can help ensure data extraction remains uninterrupted and accurate.
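One possible way to make a scraper tolerate minor layout changes is to try several candidate locations for the same field, in order, and to return `None` when none of them match so the failure is easy to detect. The sketch below assumes `beautifulsoup4` is installed; the tag names and attributes in `PRICE_LOCATORS` are hypothetical examples, not taken from any real site.

```python
from bs4 import BeautifulSoup

# Hypothetical locators for the same field, ordered from most to least preferred.
PRICE_LOCATORS = [
    ("span", {"class": "price"}),
    ("div", {"class": "product-price"}),
    ("span", {"itemprop": "price"}),
]


def find_price(html):
    """Return the text of the first locator that matches, or None if none do."""
    soup = BeautifulSoup(html, "html.parser")
    for tag_name, attrs in PRICE_LOCATORS:
        tag = soup.find(tag_name, attrs=attrs)
        if tag is not None:
            return tag.get_text(strip=True)
    # No locator matched: the layout may have changed and the list needs updating.
    return None
```

Logging or alerting whenever `find_price` returns `None` turns a silent breakage into a maintenance task.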

Best Practices for Effective Web Scraping

To maximize the effectiveness of web scraping efforts, adhering to best practices is essential. One of the primary guidelines is to respect the target website’s traffic, which can be achieved by pacing the scraping process to avoid overwhelming servers. Implementing delays between requests and ensuring the scraper operates during off-peak hours can minimize the risk of being blocked.
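Pacing requests can be as simple as sleeping between them. The following is a minimal sketch: `fetch_politely` is a hypothetical helper, the `fetch` argument stands in for whatever download function the scraper uses, and the two-second default is an arbitrary assumption rather than a recommended value.

```python
import time


def fetch_politely(urls, fetch, delay_seconds=2.0, sleep=time.sleep):
    """Call fetch(url) for each URL in order, pausing between consecutive requests.

    The sleep function is injectable so the pacing can be tested without waiting.
    """
    results = []
    for index, url in enumerate(urls):
        if index > 0:
            # Wait before every request except the first one.
            sleep(delay_seconds)
        results.append(fetch(url))
    return results
```

If the site's robots.txt declares a crawl delay, that value is a natural choice for `delay_seconds`.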

Another best practice involves setting a User-Agent header that resembles a standard web browser, which improves the chances of successful data retrieval and reduces the likelihood of being blocked by anti-scraping measures. Additionally, a multi-threaded scraping approach can accelerate data collection, provided it is done responsibly and ethically.
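Both ideas can be sketched with the standard library alone. The code below sets a User-Agent header on each request and uses a small thread pool to fetch several pages at once. The `USER_AGENT` string is a hypothetical placeholder (this sketch uses one that identifies the scraper; a browser-style string would be set the same way), and `max_workers=4` is an assumed, deliberately low limit.

```python
import urllib.request
from concurrent.futures import ThreadPoolExecutor

# Hypothetical User-Agent value; replace it with the string your scraper should send.
USER_AGENT = "example-scraper/0.1 (+https://example.com/contact)"


def build_request(url):
    """Create a request object that carries the custom User-Agent header."""
    return urllib.request.Request(url, headers={"User-Agent": USER_AGENT})


def fetch(url, timeout=10.0):
    """Download one URL and return the raw response body as bytes."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
        return response.read()


def fetch_many(urls, fetch_one=fetch, max_workers=4):
    """Fetch URLs concurrently; results come back in the same order as urls."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch_one, urls))
```

Keeping `max_workers` small bounds the number of simultaneous connections, which is what keeps a threaded scraper from overwhelming the target server.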

Web Scraping for Market Research

Market research is one of the primary applications of web scraping, allowing businesses to gather significant insights about competitors, industry trends, and customer preferences. By scraping product listings, reviews, and pricing from e-commerce websites, companies can make informed decisions about their own offerings and marketing strategies. Such data is invaluable for identifying gaps in the market and tailoring products to meet consumer demand.

Moreover, web scraping tools can be used to track social media sentiment and analyze feedback from customers. By consolidating this data, businesses can adapt their strategies to improve customer satisfaction and foster loyalty. Given the dynamic nature of today’s market, leveraging web scraping for market research empowers organizations to stay ahead of the competition.

The Future of Web Scraping Technologies

As technology evolves, so too does the landscape of web scraping. Innovations in artificial intelligence and machine learning are set to transform how data is extracted and analyzed. These advancements will likely lead to more sophisticated crawling algorithms that can better understand website structures and contextualize content, making scraping not only faster but more accurate.

Additionally, improved tools and libraries are emerging, offering users enhanced capabilities in data analysis and visualization. The trend towards automation in web scraping will reduce the need for manual intervention, allowing businesses and developers to focus their efforts on interpreting data rather than gathering it. As we look to the future, the synergy of web scraping with other emerging technologies will shape how data drives decision-making across industries.

Frequently Asked Questions

What is web scraping and how is it used?

Web scraping is the process of programmatically extracting data from websites. It is used to gather information from various online sources for purposes such as data analysis, market research, and content aggregation.

What are some popular web scraping tools available?

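Some popular web scraping tools include Python libraries like Beautiful Soup and Scrapy. Beautiful Soup parses HTML content and extracts relevant data from it, usually alongside a separate HTTP library such as Requests, while Scrapy handles sending HTTP requests, parsing, and data export within one framework.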

How can I get started with Python web scraping?

To get started with Python web scraping, you can install libraries like Beautiful Soup and Requests (published on PyPI as `beautifulsoup4` and `requests`). Follow online tutorials to understand how to send requests to websites, fetch their HTML, and parse the data you need.

What is Beautiful Soup and how does it aid in web scraping?

Beautiful Soup is a Python library that simplifies the process of parsing HTML and XML documents. It allows users to navigate and search the parse tree easily, making it a popular choice for web scraping projects.

What are Scrapy tutorials and how can they help beginners?

Scrapy tutorials provide step-by-step guidance on using the Scrapy framework for web scraping. They help beginners understand how to create spiders, manage requests, and extract data efficiently.

What are some effective data extraction techniques in web scraping?

Effective data extraction techniques include using CSS selectors, XPath, and regular expressions to identify and extract specific elements from HTML documents. These methods improve the precision of the data collected during web scraping.
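As a small illustration of two of these techniques, the sketch below extracts prices from a hypothetical snippet, once with an XPath expression and once with a regular expression. It uses only the standard library: `xml.etree.ElementTree` supports a limited XPath subset and requires well-formed markup, so it is an assumption that works for this sample but often not for real-world HTML, where a dedicated HTML parser is the usual choice.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical, well-formed sample markup.
SNIPPET = """
<div>
  <p class="item"><span class="name">Widget</span> <span class="price">$19.99</span></p>
  <p class="item"><span class="name">Gadget</span> <span class="price">$5.00</span></p>
</div>
"""


def prices_via_xpath(markup):
    """Select every <span class="price"> with an XPath expression and return its text."""
    root = ET.fromstring(markup)
    return [element.text for element in root.findall(".//span[@class='price']")]


# Matches a dollar sign followed by digits, a dot, and exactly two decimals.
PRICE_PATTERN = re.compile(r"\$(\d+\.\d{2})")


def prices_via_regex(markup):
    """Find dollar amounts anywhere in the text and return them as floats."""
    return [float(amount) for amount in PRICE_PATTERN.findall(markup)]
```

The XPath version depends on the document structure, while the regular expression depends only on the text pattern. Structural selectors are usually more precise, and regular expressions are better suited to cleaning up values after they have been located.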

Is it legal to use web scraping on websites?

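The legality of web scraping depends on factors that include the website’s terms of service, its robots.txt file, and the laws that apply where you and the site operate. Always check the terms and robots.txt before scraping to ensure compliance and reduce the risk of legal issues.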

How do I handle pagination while web scraping?

To handle pagination during web scraping, you can programmatically follow ‘Next’ page links or manipulate the URL parameters to extract data from multiple pages systematically.
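Both approaches can be sketched with the standard library. In the code below, `with_page` rewrites a query parameter (the parameter name `page` is an assumption, since sites differ), and `crawl_pages` follows "Next" links through a hypothetical `get_page` callback that returns the items on a page together with the next URL, or `None` on the last page.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_page(url, page, param="page"):
    """Return url with its page query parameter set to the given page number."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query[param] = str(page)
    return urlunsplit(parts._replace(query=urlencode(query)))


def crawl_pages(start_url, get_page, max_pages=50):
    """Follow next-page links from start_url and collect the items from each page.

    get_page(url) must return a tuple (items, next_url_or_None).
    """
    url = start_url
    seen = set()
    results = []
    # Stop on the last page, on a link loop, or once the page limit is reached.
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        items, url = get_page(url)
        results.extend(items)
    return results
```

For example, `with_page("https://example.com/items?sort=asc", 3)` returns `"https://example.com/items?sort=asc&page=3"`. The `seen` set and `max_pages` limit guard against sites whose "Next" link loops back to an earlier page.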

Can web scraping affect a website’s performance?

Yes, aggressive web scraping can affect a website’s performance by overwhelming its server with too many requests. It’s important to implement respectful scraping practices like rate limiting and adhering to rules set in the robots.txt file.

What challenges do I face while using web scraping tools?

Common challenges in web scraping include dealing with JavaScript-heavy websites, handling CAPTCHAs, managing dynamic content, and ensuring your scraper is durable against changes in the website’s structure.

| Key Point | Description |
|---|---|
| Definition | Web scraping is the programmatic extraction of data from websites. |
| Methods | Common methods include using Python libraries like Beautiful Soup and Scrapy. |
| Process | The typical process involves sending a request to a web server to fetch HTML content, followed by parsing to extract desired data. |
| Legal Considerations | It is crucial to respect the website’s robots.txt file and terms of service to avoid legal implications. |

Summary

Web scraping is a powerful technique for extracting data from the web. As businesses continue to rely on data-driven decisions, the importance of web scraping grows. By employing the right tools and methods, such as Python libraries like Beautiful Soup and Scrapy, users can efficiently gather data while adhering to legal guidelines. Understanding the web scraping process, including fetching HTML and parsing data, is essential for any aspiring web scraper.