Web scraping is a technique for extracting data from web pages automatically, letting users gather large amounts of information from many online sources. Automating data collection saves individuals and businesses considerable time and effort compared with copying information by hand. Tools such as BeautifulSoup and Scrapy make scraping accessible to users at many skill levels. However, scrapers must follow ethical practices to avoid legal trouble and to avoid harming the sites they collect data from. This tutorial covers the essentials of scraping responsibly while still collecting the data you need.

Web scraping is closely related to web crawling, which discovers pages by following links, and it often feeds data mining, which analyzes the collected data. In practice, scraping uses automated software that requests pages, navigates their HTML, and extracts useful data from many online sources. Sound scraping methods and best practices let users gather information ethically while complying with relevant regulations. This guide covers the fundamentals of using data extraction tools effectively, for newcomers and experienced users alike.

Defining Web Scraping and Its Importance

Web scraping is the automated extraction of data from websites. It matters in sectors such as marketing, finance, and research because it lets users collect large amounts of data from many sources far faster than manual collection. Its value lies in the insights and analytics it supports: businesses can base decisions on current data rather than on stale snapshots.

As online content keeps growing, collecting data by hand becomes increasingly impractical. With web scraping, organizations can build datasets for competitive analysis, trend spotting, and consumer behavior studies that would be too costly to assemble manually.

Key Web Scraping Techniques Explained

There are two broad approaches to web scraping: static and dynamic. Static scraping applies to pages whose content is already present in the HTML the server returns. The scraper only needs to download that HTML and parse it; no JavaScript has to run. This makes static scraping simpler and usually faster than dynamic scraping.
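The sketch below illustrates static scraping with Python's standard-library `html.parser`, so it runs without third-party packages. The sample HTML, the `product`/`title`/`price` class names, and the `ProductParser` and `scrape_products` names are hypothetical stand-ins for a real page; BeautifulSoup or Scrapy would express the same extraction in less code.

```python
from __future__ import annotations

from html.parser import HTMLParser

# Hypothetical static page; a real scraper would download this HTML first.
SAMPLE_HTML = """
<ul id="products">
  <li class="product"><h2 class="title">Desk Lamp</h2><span class="price">19.99</span></li>
  <li class="product"><h2 class="title">Office Chair</h2><span class="price">89.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects one dict per <li class="product">, holding its title and price text."""

    def __init__(self) -> None:
        super().__init__()
        self.products: list[dict[str, str]] = []
        self._field: str | None = None  # field whose text is currently being read

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self.products.append({})  # start a new record
        elif tag == "h2" and "title" in classes:
            self._field = "title"
        elif tag == "span" and "price" in classes:
            self._field = "price"

    def handle_endtag(self, tag):
        if tag in ("h2", "span"):
            self._field = None

    def handle_data(self, data):
        if self._field and self.products:
            # Accumulate, in case a text node arrives in more than one chunk.
            current = self.products[-1]
            current[self._field] = current.get(self._field, "") + data


def scrape_products(html: str) -> list[dict[str, str]]:
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    # Trim surrounding whitespace from every extracted value.
    return [{key: value.strip() for key, value in p.items()} for p in parser.products]


if __name__ == "__main__":
    for product in scrape_products(SAMPLE_HTML):
        print(product["title"], product["price"])
```

Because the data is already in the served HTML, one parsing pass is enough; no browser or JavaScript engine is involved.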

Dynamic scraping, on the other hand, handles pages whose content is generated by JavaScript. Many modern websites use JavaScript to load or modify content after the initial HTML document has loaded, so that content never appears in the raw HTML. In such cases, scrapers must use headless browsers or other tools that execute JavaScript, so that the data is rendered and available for extraction.
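One rough way to tell whether a page needs dynamic scraping is to check whether the element you want is empty in the raw HTML the server returns. The sketch below is a heuristic under stated assumptions: the two sample pages, the `products` element id, and the `needs_browser` helper are hypothetical. When it returns `True`, the data is probably filled in by JavaScript, and a headless browser such as Puppeteer is the more reliable route.

```python
from __future__ import annotations

from html.parser import HTMLParser

# Hypothetical raw HTML for a JavaScript-driven page: the list is empty until
# a script fills it in inside the browser.
JS_PAGE = """
<div id="products"></div>
<script src="/static/app.js"></script>
"""

# Hypothetical raw HTML for a static page: the data is already present.
STATIC_PAGE = """
<div id="products"><p>Desk Lamp</p><p>Office Chair</p></div>
"""

# Elements that never have a closing tag, so they must not change the nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class ElementTextParser(HTMLParser):
    """Collects all text inside the element with the given id, including nested tags."""

    def __init__(self, element_id: str) -> None:
        super().__init__()
        self.element_id = element_id
        self.depth = 0  # greater than 0 while inside the target element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif dict(attrs).get("id") == self.element_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def needs_browser(html: str, element_id: str) -> bool:
    """Heuristic: True if the target element is missing or has no text in the raw HTML."""
    parser = ElementTextParser(element_id)
    parser.feed(html)
    parser.close()
    return not "".join(parser.chunks).strip()


if __name__ == "__main__":
    print(needs_browser(JS_PAGE, "products"))      # True
    print(needs_browser(STATIC_PAGE, "products"))  # False
```

The check is deliberately cheap: it costs one HTTP request and a parse, and you only pay for a headless browser on pages that actually need one.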

Popular Tools for Effective Web Scraping

Several tools and libraries stand out for their functionality and ease of use. BeautifulSoup is a Python library for parsing HTML and XML documents and navigating the resulting tree. Scrapy is a Python framework for building crawlers: in addition to extracting data, it handles requesting pages, following links, and exporting results. Both are valued for their readable APIs and strong community support.
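As a small standard-library illustration of XML parsing in a scraping context, the sketch below reads a sitemap, an XML file many sites publish to list their URLs, using `xml.etree.ElementTree`. The sample sitemap and the `sitemap_entries` helper are hypothetical; the namespace URI is the one defined by the sitemaps.org protocol. BeautifulSoup (with an XML parser installed) or Scrapy's selectors offer more convenient ways to do the same thing.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap; real sites often serve one at a URL such as /sitemap.xml.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc><lastmod>2024-01-15</lastmod></url>
  <url><loc>https://example.com/products</loc></url>
</urlset>"""


def sitemap_entries(xml_text: str) -> list[tuple[str, str | None]]:
    """Return (url, lastmod) pairs; lastmod is None when the sitemap omits it."""
    root = ET.fromstring(xml_text)
    entries = []
    for url in root.findall("sm:url", namespaces=SITEMAP_NS):
        loc = url.findtext("sm:loc", namespaces=SITEMAP_NS)
        if not loc:
            continue  # skip malformed entries without a <loc>
        lastmod = url.findtext("sm:lastmod", namespaces=SITEMAP_NS)
        entries.append((loc.strip(), lastmod))
    return entries


if __name__ == "__main__":
    for loc, lastmod in sitemap_entries(SAMPLE_SITEMAP):
        print(loc, lastmod)
```

A list of URLs like this can then drive the page-by-page scraping step, and `lastmod` can help skip pages that have not changed since the last run.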

Puppeteer is a Node.js library that provides a high-level API for controlling Chrome or Chromium, which it runs headless by default. This makes it well suited to dynamic scraping: it can wait for JavaScript-rendered content, take screenshots, and interact with pages as a user would, for example by clicking and typing. Each of these tools fits a different need, depending on the complexity of the target website.

Ethics and Legalities in Web Scraping

Navigating the ethical landscape of web scraping is paramount for anyone engaged in data extraction. Large datasets are tempting, but it is crucial to consider the consequences of collecting them. A foundational step is respecting a website's ‘robots.txt’ file, which tells automated clients which parts of the site they may access. Note that robots.txt is a voluntary convention (the Robots Exclusion Protocol) rather than a law: honoring it signals good faith, but it does not by itself guarantee legal compliance.
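Python's standard library includes a robots.txt parser, `urllib.robotparser.RobotFileParser`. The sketch below parses a hypothetical robots.txt from a string and asks whether a hypothetical user agent may fetch two URLs. Against a live site you would call `set_url()` with the site's robots.txt URL and then `read()` instead of `parse()`. A `True` result only means robots.txt does not forbid the path; it says nothing about the site's terms of service.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. Against a live site you would instead call
# rp.set_url("https://example.com/robots.txt") followed by rp.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-scraper"  # hypothetical name for our bot

rp = RobotFileParser()
rp.parse(ROBOTS_TXT.splitlines())

print(rp.can_fetch(USER_AGENT, "https://example.com/products"))      # True
print(rp.can_fetch(USER_AGENT, "https://example.com/private/data"))  # False
print(rp.crawl_delay(USER_AGENT))                                    # 5
```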

Scrapers must also respect copyright law and each site's terms of service. Scraping against a site's terms or without permission can lead to legal consequences and damage an organization's reputation. Anyone using web scraping therefore needs a working understanding of both ethical guidelines and the applicable legal frameworks.

Learning Resources: Web Scraping Tutorial Suggestions

For those interested in going deeper, many online tutorials offer step-by-step guidance, from the basics of web scraping to advanced techniques in various programming languages. The best guides include practical examples, which make complex concepts easier for beginners to grasp.

Additionally, engaging with forums and communities focused on web scraping can enhance the learning experience. Sites like Stack Overflow and dedicated web scraping subreddits can be invaluable resources for troubleshooting and exchanging tips with fellow scrapers, helping to foster a culture of sharing knowledge and best practices.

The Future of Web Scraping Technology

As technology continues to evolve, web scraping is likely to advance as well. Artificial intelligence and machine learning could be integrated into scraping tools to make them more efficient and capable. These techniques may enable smarter extraction that takes a page's context into account and pulls structured information out of unstructured text.

In addition, the growing focus on data privacy may lead to stricter regulations governing web scraping practices. As organizations increasingly prioritize consumer trust, understanding and adapting to these changes will be essential for web scraper developers and users alike. The interplay between evolving technology and regulatory frameworks will shape the future landscape of web scraping.

Integrating Web Scraping into Data Strategies

Incorporating web scraping into a broader data strategy can strengthen an organization's competitive intelligence. By systematically collecting data from online sources, businesses can track market trends, customer preferences, and competitor activity, which helps them respond quickly in fast-changing industries.

Combining scraped data with analytics tools allows deeper trend analysis. Organizations can build dashboards and visualizations on top of scraped data to support decision-making and strategic planning.

Best Practices for Effective Web Scraping

To keep a scraping project reliable and efficient, follow a few best practices that reduce errors. First, document your scraping logic and methods clearly, so you can refer back to them when adjustments are needed. Second, limit your request rate so you do not overload the server; this is crucial for maintaining good relations with website owners.
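A simple way to limit your request rate is to enforce a minimum interval between consecutive requests. The `RateLimiter` class below is a minimal sketch, not a library API; the 0.5-second interval and the URLs are hypothetical, and the actual request is left as a comment. In practice you might take the interval from a site's `Crawl-delay`, if it publishes one, or simply err on the slow side.

```python
import time
from typing import Optional


class RateLimiter:
    """Blocks until at least min_interval seconds have passed since the previous call."""

    def __init__(self, min_interval: float) -> None:
        self.min_interval = min_interval
        self._last: Optional[float] = None  # monotonic time of the previous request

    def wait(self) -> None:
        if self._last is not None:
            elapsed = time.monotonic() - self._last
            if elapsed < self.min_interval:
                time.sleep(self.min_interval - elapsed)
        self._last = time.monotonic()


if __name__ == "__main__":
    limiter = RateLimiter(min_interval=0.5)  # hypothetical: at most 2 requests per second
    start = time.monotonic()
    for url in ["https://example.com/a", "https://example.com/b", "https://example.com/c"]:
        limiter.wait()
        # A real scraper would fetch `url` here.
        print(f"{time.monotonic() - start:.2f}s {url}")
```

Using `time.monotonic()` rather than wall-clock time keeps the spacing correct even if the system clock is adjusted mid-run.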

Another important aspect is testing your scrapers regularly. Websites often update their layouts, which can break your scraping script. By frequently validating that your scrapers perform as expected, you can ensure the reliability of the data you collect. Incorporating these best practices will enhance the robustness and effectiveness of your web scraping endeavors.
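One way to test scrapers regularly is to validate every batch of scraped records against the fields you expect, so that a layout change shows up as a failed check instead of silently empty data. The sketch below assumes hypothetical records with `title` and `price` fields; `REQUIRED_FIELDS` and `validate_records` are illustrative names to adapt to your own data.

```python
from __future__ import annotations

# Hypothetical schema: the fields every scraped record should contain.
REQUIRED_FIELDS = ("title", "price")


def validate_records(records: list[dict[str, str]], min_count: int = 1) -> list[str]:
    """Return a list of problems found; an empty list means the batch looks healthy."""
    problems: list[str] = []
    if len(records) < min_count:
        problems.append(f"expected at least {min_count} record(s), got {len(records)}")
    for i, record in enumerate(records):
        for field in REQUIRED_FIELDS:
            if not record.get(field):
                problems.append(f"record {i}: missing {field!r}")
        price = record.get("price")
        if price:
            try:
                float(price)
            except ValueError:
                problems.append(f"record {i}: price {price!r} is not a number")
    return problems


if __name__ == "__main__":
    healthy = [{"title": "Desk Lamp", "price": "19.99"}]
    broken = [{"title": "", "price": "Call for price"}]
    print(validate_records(healthy))  # []
    print(validate_records(broken))
    print(validate_records([]))
```

Run from a scheduled job or a test suite, a non-empty result is a cue to inspect the target page before trusting newly scraped data.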

Challenges in Web Scraping and How to Overcome Them

Web scraping offers many benefits, but it also has challenges. A common hurdle is anti-scraping measures such as CAPTCHAs, IP blocking, or mandatory user authentication. Scrapers sometimes work around these barriers with rotating proxies or headless browsers that mimic human behavior. Such measures, however, usually signal that the site owner does not want automated access, so review the site's terms of service, and consider asking for permission, before attempting to bypass them.

Additionally, maintaining data accuracy can be a significant challenge, especially when scraping data from dynamically changing web pages. Implementing robust error handling and fallback mechanisms can help ensure that scrapers effectively account for inconsistencies in the source data. By being aware of these challenges and preparing contingency plans, web scrapers can enhance their success rates and data reliability.
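The sketch below shows two common forms of error handling: retrying transient network failures with exponential backoff, and trying a list of fallback extractors in order when a page's markup may vary. The helper names, the `OSError` default for retryable errors, and the two regex-based price extractors are hypothetical illustrations; for real pages, a proper HTML parser is more robust than regular expressions.

```python
from __future__ import annotations

import random
import re
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def with_retries(fn: Callable[[], T], attempts: int = 3, base_delay: float = 1.0,
                 retry_on: tuple[type[BaseException], ...] = (OSError,)) -> T:
    """Call fn(), retrying on retry_on exceptions with exponential backoff plus jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == attempts:
                raise  # out of attempts: surface the last error
            delay = base_delay * 2 ** (attempt - 1)  # 1x, 2x, 4x, ...
            time.sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries
    raise AssertionError("unreachable")


def first_successful(extractors: list[Callable[[str], Optional[str]]], html: str) -> Optional[str]:
    """Try each extractor in order and return the first non-empty result."""
    for extract in extractors:
        try:
            value = extract(html)
        except (AttributeError, IndexError, KeyError, ValueError):
            continue  # treat a crashing extractor like a miss and fall back
        if value:
            return value
    return None


def price_from_data_attr(html: str) -> Optional[str]:
    """Hypothetical current layout: <div data-price="19.99">."""
    match = re.search(r'data-price="([^"]+)"', html)
    return match.group(1) if match else None


def price_from_span(html: str) -> Optional[str]:
    """Hypothetical older layout: <span class="price">19.99</span>."""
    match = re.search(r'<span class="price">([^<]+)</span>', html)
    return match.group(1).strip() if match else None


if __name__ == "__main__":
    fetch_attempts = 0

    def flaky_fetch() -> str:
        """Hypothetical stand-in for a network call that fails twice, then succeeds."""
        global fetch_attempts
        fetch_attempts += 1
        if fetch_attempts < 3:
            raise ConnectionError("temporary failure")
        return '<span class="price">19.99</span>'

    html = with_retries(flaky_fetch, attempts=3, base_delay=0.1)
    print(first_successful([price_from_data_attr, price_from_span], html))  # 19.99
```

Retrying only on `OSError` (which covers connection errors and timeouts) avoids hammering a server over errors that another attempt will not fix, such as a bug in the parsing code.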

Frequently Asked Questions

What is web scraping and how does it work?

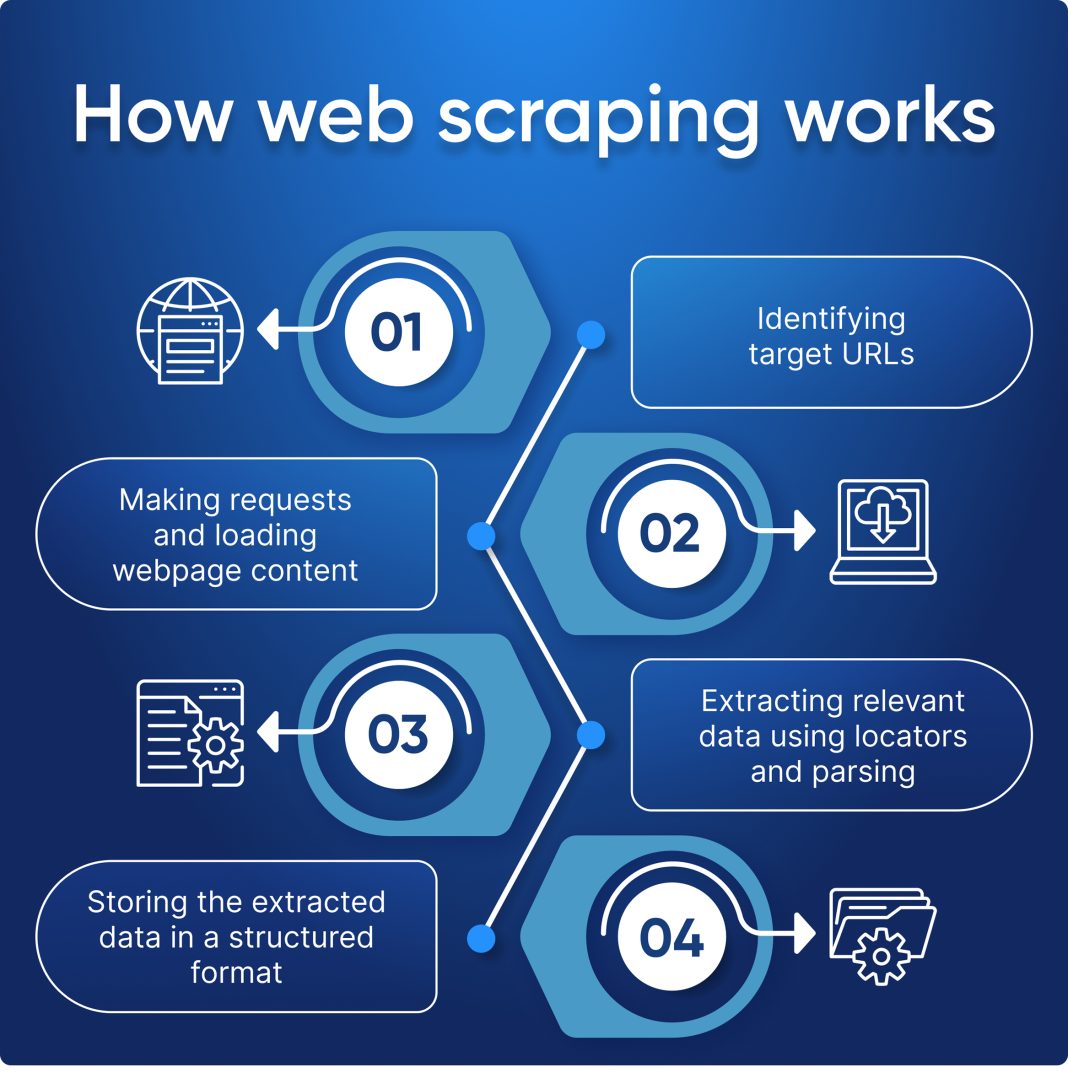

Web scraping is the automated process of extracting data from websites using a scraper that makes HTTP requests to fetch HTML content and parse it to extract needed information.
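The fetch step can be sketched with the standard library's `urllib.request`. The `USER_AGENT` string and both helper functions are hypothetical; the returned HTML would then go to a parser such as BeautifulSoup for the extraction step.

```python
from urllib.request import Request, urlopen

# Hypothetical identifier: a descriptive User-Agent with contact details lets
# site owners see who is sending the requests.
USER_AGENT = "example-scraper/0.1 (+mailto:you@example.com)"


def build_request(url: str) -> Request:
    """Prepare an HTTP GET request that identifies the scraper."""
    return Request(url, headers={"User-Agent": USER_AGENT})


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Fetch a page and decode its body using the charset the server declares."""
    with urlopen(build_request(url), timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

For example, `fetch_html("https://example.com/")` would return that page's HTML as a string, ready to be parsed.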

What are common web scraping techniques?

Common web scraping techniques include static scraping, used for unchanging web content, and dynamic scraping, which involves executing scripts to retrieve data from websites that load content dynamically with JavaScript.

What are the best tools for web scraping?

Some of the best tools for web scraping include libraries like BeautifulSoup for parsing HTML, Scrapy for building scraping applications, and Puppeteer for automating web browsers to scrape dynamic content.

Is ethical web scraping possible?

Yes. Ethical web scraping means honoring a site's robots.txt file, respecting copyright, following the terms of service, and keeping request rates low enough not to burden the server. These practices also reduce the risk of legal problems.

Where can I find a web scraping tutorial?

You can find web scraping tutorials online through educational platforms, coding bootcamps, or dedicated tech blogs that cover popular tools and techniques for effective data extraction.

| Topic | Key Points |
|---|---|
| What is Web Scraping? | Automated process of extracting data from websites using a scraper to fetch and parse HTML content. |
| Techniques Used in Web Scraping | Static scraping: for content present in the HTML the server returns. Dynamic scraping: for content loaded by JavaScript. |
| Common Tools for Web Scraping | Tools like BeautifulSoup, Scrapy, and Puppeteer are popular for scraping. |
| Legal and Ethical Considerations | Check robots.txt, and respect copyright law and terms of service when scraping. |

Summary

Web scraping is a highly valuable technique for gathering data from the internet efficiently. It involves using automated programs to extract pertinent information from websites. However, it is crucial to navigate the legal and ethical landscape surrounding web scraping, ensuring compliance with relevant laws and respect for site policies. By utilizing the right tools and techniques, individuals and businesses can significantly enhance their data collection efforts.