HTML content scraping is an essential technique used by developers and data analysts to extract valuable information from web pages. By utilizing this method, one can efficiently scrape website content to acquire data for analysis, research, or digital marketing purposes. Notably, effective HTML parsing allows users to dissect the structure of a webpage and extract HTML elements with precision. With the rise of web scraping tools, data extraction from various online sources has become quicker and more straightforward, empowering businesses to make data-driven decisions. In this digital age, mastering HTML content scraping can significantly enhance a company’s ability to harness actionable insights from the vast amounts of information available online.

When we talk about extracting data from websites, several related terms come to mind, such as web data harvesting and internet content collection. The process uses specialized software to retrieve specific pieces of information from online platforms, often with web crawlers or data mining tools. Automating the collection lets users analyze large volumes of information systematically. Whether it’s for competitive analysis, market research, or enriching databases, understanding the nuances of content retrieval is crucial in today’s data-centric environment. As organizations continue to embrace digital transformation, the importance of effective data extraction strategies cannot be overstated.

Understanding HTML Content Scraping

HTML content scraping refers to the process of automatically extracting data from web pages using a script or program, commonly known as a web scraper. This technique is crucial for data extraction, enabling users to gather information from websites for various purposes, such as research, market analysis, and competitive intelligence. By leveraging tools designed to parse HTML, users can effectively scrape website content without any manual copying and pasting, saving time and reducing human error.

An HTML parser is an essential component in the scraping process, transforming the raw HTML code into a structured format that is easier to work with. This structure allows for the identification of specific elements on a webpage, such as headings, paragraphs, or tables, facilitating selective data extraction. Whether you’re looking to gather product prices from e-commerce sites or compile news articles from various sources, understanding HTML content scraping is the first step toward mastering data extraction.
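To make the idea of turning raw HTML into a structured form concrete, here is a minimal sketch that uses Python’s built-in `html.parser` module rather than a third-party library. The class name `OutlineParser`, the helper `outline`, and the sample markup are all made up for illustration.

```python
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Collect the text of headings and paragraphs as (tag, text) pairs."""

    WANTED = {"h1", "h2", "h3", "p"}

    def __init__(self):
        super().__init__()
        self.items = []      # finished (tag, text) pairs
        self._tag = None     # wanted tag currently open, if any
        self._chunks = []    # text pieces seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag in self.WANTED:
            self._tag = tag
            self._chunks = []

    def handle_data(self, data):
        if self._tag is not None:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == self._tag:
            # Join the pieces and collapse runs of whitespace.
            text = " ".join("".join(self._chunks).split())
            self.items.append((tag, text))
            self._tag = None


def outline(html):
    """Return the headings and paragraphs found in an HTML string."""
    parser = OutlineParser()
    parser.feed(html)
    parser.close()
    return parser.items


# Hypothetical page used only for this example.
SAMPLE_HTML = """
<html><body>
  <h1>Daily News</h1>
  <p>First story with <b>bold</b> text.</p>
  <h2>Sports</h2>
  <p>Second story.</p>
</body></html>
"""

for tag, text in outline(SAMPLE_HTML):
    print(tag, "->", text)
```

The parser reports each element it meets through callbacks, so the script only has to remember which tag is open and gather the text inside it. Libraries such as Beautiful Soup wrap this kind of bookkeeping behind a search-style interface.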

Techniques for Web Scraping

Web scraping techniques vary in complexity, from short scripts to full frameworks that automate the entire process. For basic data extraction, a parsing library such as Beautiful Soup lets you write Python scripts that navigate HTML structures, while a framework such as Scrapy also handles crawling and request scheduling for larger jobs. By selecting the appropriate tags and attributes, users can scrape website content efficiently, focusing on the exact data they need without sifting through unnecessary information.
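Selecting by tag and attribute can be sketched as follows. The example again uses only the standard library, and it assumes a hypothetical page where prices sit in `<span class="price">` elements; the class name, the markup, and the `extract_prices` helper are illustrative, not taken from any real site.

```python
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collect the text of <span> elements whose class list contains "price".

    Assumes price spans do not contain nested <span> elements.
    """

    def __init__(self):
        super().__init__()
        self.prices = []
        self._in_price = False

    def handle_starttag(self, tag, attrs):
        # A class attribute may hold several names, e.g. class="price sale".
        classes = (dict(attrs).get("class") or "").split()
        if tag == "span" and "price" in classes:
            self._in_price = True

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.prices.append(data.strip())

    def handle_endtag(self, tag):
        if tag == "span":
            self._in_price = False


def extract_prices(html):
    """Return prices as floats, dropping a leading dollar sign."""
    parser = PriceParser()
    parser.feed(html)
    parser.close()
    return [float(text.lstrip("$")) for text in parser.prices]


# Hypothetical product listing used only for this example.
LISTING_HTML = """
<ul>
  <li><span class="name">Kettle</span> <span class="price">$19.99</span></li>
  <li><span class="name">Toaster</span> <span class="price sale">$24.50</span></li>
</ul>
"""

print(extract_prices(LISTING_HTML))
```

Matching on the class list instead of the exact attribute string keeps the selector working when a page adds extra classes such as `sale`.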

In contrast, more complex web scraping projects may rely on headless browsers or APIs. A headless browser loads a page and runs its JavaScript much as a human visitor’s browser would, which makes it possible to retrieve dynamic content that is loaded after the initial HTML page is served. An API, where the site offers one, returns structured data directly and avoids HTML parsing altogether. By combining these techniques, scrapers can handle a wider variety of websites and extract data more thoroughly, expanding the scope of their research or analysis.

Leveraging Data Extraction for Business Growth

Data extraction via scraping is not only for individual projects but has significant implications for businesses as well. Companies can utilize scraped data to monitor competitor prices, analyze market trends, or even gather user reviews, providing valuable insights that help shape their strategies. By harnessing the power of web scraping, businesses can make data-driven decisions that enhance their operational efficiency and improve customer satisfaction.

Moreover, staying compliant with web scraping regulations is crucial for businesses, as scraping too aggressively or collecting data without permission can lead to legal issues. By implementing ethical scraping practices, such as respecting robots.txt files and rate limiting requests, companies can benefit from data extraction without compromising their integrity or facing potential penalties.
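Checking robots.txt before fetching a page might look like the sketch below, which uses Python’s standard `urllib.robotparser` module. The rules, the URLs, and the bot name `example-research-bot` are invented for the example; a real scraper would download the site’s actual robots.txt instead of parsing an inline string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the example runs offline.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

USER_AGENT = "example-research-bot"  # assumed name for this sketch


def build_rules(robots_txt):
    """Parse robots.txt text into a rule set that can be queried."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


rules = build_rules(ROBOTS_TXT)

for url in ("https://example.com/products/1",
            "https://example.com/private/report"):
    allowed = rules.can_fetch(USER_AGENT, url)
    print(url, "allowed" if allowed else "disallowed")

# Sites may also suggest a pause between requests.
print("crawl delay:", rules.crawl_delay(USER_AGENT))
```

A robots.txt file expresses the site owner’s wishes but is not a legal document, so this check complements reading the terms of service rather than replacing it.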

Best Practices for Using HTML Parsers

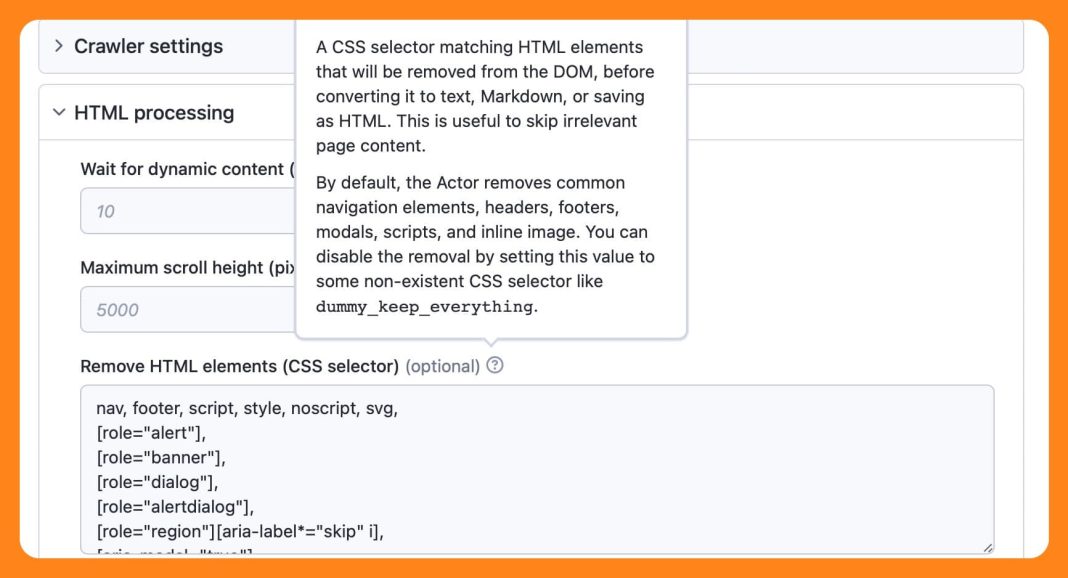

When engaging in web scraping, following best practices for HTML parsers can significantly improve the quality of the extracted data. It’s important to select tools that match the complexity of the target website’s structure and the language you work in. For instance, lxml (Python) and HtmlAgilityPack (.NET) are commonly chosen when performance matters, especially with large datasets, as both are built with speed and reliability in mind.

Furthermore, ensuring that your extraction logic accounts for potential changes in the website’s HTML structure is vital. Websites often undergo updates that can alter the design or layout, which can disrupt scraping scripts. Consequently, maintaining flexibility in your extraction logic, including robust error handling and updates to your scraping strategy as needed, will ensure efficient and ongoing data retrieval.
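One way to keep extraction logic flexible is to try several selectors in order and fail loudly when none match. The sketch below shows that pattern with the standard library; the selector list, the class name `product-title`, and the helper names are assumptions made for illustration.

```python
from html.parser import HTMLParser


class FirstMatch(HTMLParser):
    """Capture the text of the first element matching a tag and optional class."""

    def __init__(self, tag, css_class=None):
        super().__init__()
        self.tag = tag
        self.css_class = css_class
        self.text = None
        self._capturing = False
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if self.text is not None or self._capturing or tag != self.tag:
            return
        classes = (dict(attrs).get("class") or "").split()
        if self.css_class is None or self.css_class in classes:
            self._capturing = True

    def handle_data(self, data):
        if self._capturing:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if self._capturing and tag == self.tag:
            self.text = "".join(self._chunks).strip()
            self._capturing = False


def first_text(html, tag, css_class=None):
    parser = FirstMatch(tag, css_class)
    parser.feed(html)
    parser.close()
    return parser.text


# Ordered from the current layout to more generic fallbacks (all hypothetical).
TITLE_SELECTORS = [("h1", "product-title"), ("h1", None), ("title", None)]


def extract_title(html):
    """Return the first title any selector finds, or raise if none match."""
    for tag, css_class in TITLE_SELECTORS:
        text = first_text(html, tag, css_class)
        if text:
            return text
    raise LookupError("no title selector matched; the page layout may have changed")


old_layout = '<h1 class="product-title">Kettle</h1>'
new_layout = "<html><head><title>Kettle | Shop</title></head><body><h2>Kettle</h2></body></html>"

print(extract_title(old_layout))
print(extract_title(new_layout))
```

Raising an explicit error, instead of silently returning an empty value, makes a layout change show up in logs as soon as it happens.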

Common Challenges in Web Scraping

Web scraping presents a range of challenges, particularly when it comes to dealing with anti-scraping measures implemented by websites. Many sites deploy tactics such as CAPTCHAs, IP blocking, or dynamic content loading that can hinder the scraping process. To overcome these obstacles, scrapers can employ techniques like rotating IP addresses or incorporating CAPTCHA solvers, although it’s essential to do this within ethical and legal boundaries.

Another common challenge is data inconsistency resulting from changes in website design. Despite a scraper performing brilliantly one day, a minor HTML modification may render it ineffective the next. To mitigate this issue, it’s advisable to build scrapers that can adapt quickly to such changes, possibly through the use of machine learning algorithms that recognize patterns in data formatting.

Ethics and Legal Considerations in Scraping

With the rise of web scraping, ethical and legal considerations have become increasingly important. While scraping can provide valuable data, it is crucial to respect the terms of service of the websites being scraped. Many sites explicitly prohibit automated data extraction in their terms. Engaging in scraping without permission can lead to legal repercussions, including lawsuits or being permanently barred from accessing the website.

In addition to legal considerations, ethical scraping practices involve being mindful of the impact on the website’s server. Excessive scraping can lead to significant traffic that may degrade the user experience for others. Therefore, it’s advisable to implement practical limits on the number and frequency of requests, ensuring that your scraping activities do not unduly burden the website or infringe on the rights of data owners.
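A limit on request frequency can be as simple as enforcing a minimum gap between calls. The following is a rough sketch; the `Throttle` class, the 0.2 second interval, and the URLs are illustrative, and the loop only prints where a real fetch would go.

```python
import time


class Throttle:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock      # injectable so the class can be tested
        self._sleep = sleep
        self._last = None        # time of the previous request, if any

    def wait(self):
        """Pause until min_interval has passed since the last call.

        Returns the number of seconds slept.
        """
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
        if delay:
            self._sleep(delay)
        self._last = self._clock()
        return delay


throttle = Throttle(min_interval=0.2)
for url in ("https://example.com/a", "https://example.com/b", "https://example.com/c"):
    throttle.wait()
    print("would fetch", url)  # placeholder for the real request
```

A sensible interval depends on the target site; if its robots.txt states a crawl delay, that value is a reasonable starting point.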

Tools and Technologies for Scraping

Numerous tools and technologies are available for web scraping, each tailored to different user needs and technical capabilities. Some popular options include open-source libraries such as Beautiful Soup, which simplifies HTML parsing with Python, and Scrapy, a robust framework specifically designed for large-scale web scraping projects. These tools provide user-friendly interfaces and handle various aspects of data extraction, including navigating through HTML and managing request headers.

For non-programmers, visual scraping tools like ParseHub or Octoparse offer point-and-click interfaces that help users create scraping workflows without writing code. These platforms often provide built-in functions for configuring how data is extracted from pages, making scraping accessible to those less familiar with programming. Each tool has its strengths, so selecting one that aligns with your scraping goals is essential to successful data extraction.

Future Trends in Web Scraping

The future of web scraping is poised for growth and innovation as data becomes increasingly central to business operations. As technology advances, more sophisticated tools with integrated AI capabilities are being developed, allowing for enhanced data extraction processes that can adapt to dynamic web environments. Machine learning algorithms can help in extracting unstructured data more efficiently, transforming it into structured datasets with minimal user input.

Moreover, as data privacy regulations become more stringent, the focus on ethical scraping practices will intensify. Organizations will need to find innovative ways to gather data compliantly, such as leveraging APIs provided by companies that want to share their data legitimately. The evolution of web scraping technologies will undoubtedly shape the future landscape of data extraction, driving businesses toward more responsible and effective practices.

Conclusion: Mastering HTML Content Scraping

Mastering HTML content scraping requires not only an understanding of the technical aspects involved but also the ethical considerations that accompany the practice. As the demand for data continues to escalate, honing your web scraping skills will prove invaluable in navigating digital landscapes efficiently. By employing the best tools and methodologies, you can extract relevant information while maintaining compliance with legal standards.

Ultimately, effective HTML content scraping is about striking a balance between utilizing technology and adhering to ethical guidelines. As you delve deeper into data extraction, remember that the key to successful scraping lies not just in the data you collect, but also in the principles of respect and integrity in your approach.

Frequently Asked Questions

What is HTML content scraping and how does it work?

HTML content scraping is the process of extracting data from websites using tools or scripts that parse the HTML structure. By identifying and isolating specific HTML elements, like tags or attributes, users can efficiently gather information, such as text and images, from web pages for various purposes like data analysis or business intelligence.

What tools are best for scraping website content?

There are several popular tools for scraping website content, including Python libraries like Beautiful Soup and Scrapy, as well as no-code visual tools like ParseHub and Octoparse. These tools rely on HTML parsers to navigate the document structure, allowing users to automate data extraction from web pages.

Is it legal to engage in HTML content scraping?

The legality of HTML content scraping varies depending on the website and the specific circumstances. It’s crucial to review a website’s terms of service and respect its robots.txt file to ensure compliance. While scraping public data may be permissible, it’s essential to adhere to legal guidelines and practices to avoid potential issues.

How can I extract HTML effectively for web data extraction?

To extract HTML effectively for web data extraction, you can use programming languages like Python, JavaScript, or PHP along with libraries that support HTML parsing. A dedicated parser such as Beautiful Soup handles nested and malformed markup and makes it easier to pinpoint targeted data on a web page. Regular expressions can work for small, fixed patterns, but they tend to break on real-world HTML and are best kept to cleaning up text after it has been extracted.

What are the challenges of HTML scraping and how can I overcome them?

Challenges of HTML scraping include inconsistent website structures, dynamic content loaded via JavaScript, and anti-scraping measures like CAPTCHAs. Overcoming these challenges involves using advanced scraping techniques, such as headless browsers like Puppeteer, and implementing delays between requests to mimic human behavior.

How do I choose the right HTML parser for content scraping?

Choosing the right HTML parser for content scraping depends on the programming language you’re using and your specific requirements. Popular choices include Beautiful Soup for Python, jsoup for Java, and Cheerio for Node.js. Factors to consider are ease of use, performance, and compatibility with the website’s HTML structure.

What are the best practices for scraping website content?

Best practices for scraping website content include respecting the website’s terms of use, managing request rates to avoid overloading servers, sending a clear User-Agent string with your requests, and implementing error handling in your scripts. Additionally, keep your scraping activities ethical and transparent.

What data can I scrape from HTML content?

You can scrape a variety of data from HTML content, including text, images, links, tables, and metadata. Specific extraction depends on the HTML structure of the web page. By targeting the right HTML elements and attributes, you can gather structured data for analysis or storage.
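As an illustration of turning one of these element types into structured data, the sketch below reads an HTML table into a list of dictionaries using only the standard library. The table contents and the names `TableParser` and `table_records` are made up for the example, and the code assumes a single, non-nested table whose first row holds the headers.

```python
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collect the cells of every <tr> as a list of strings."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None    # cells of the row being read
        self._cell = None   # text pieces of the cell being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def table_records(html):
    """Return table rows as dicts keyed by the header row."""
    parser = TableParser()
    parser.feed(html)
    parser.close()
    if not parser.rows:
        return []
    header, *body = parser.rows
    return [dict(zip(header, row)) for row in body]


# Hypothetical table used only for this example.
TABLE_HTML = """
<table>
  <tr><th>Product</th><th>Price</th></tr>
  <tr><td>Kettle</td><td>19.99</td></tr>
  <tr><td>Toaster</td><td>24.50</td></tr>
</table>
"""

for record in table_records(TABLE_HTML):
    print(record)
```

Values are kept as strings here; converting them to numbers or dates is usually a separate cleaning step once the structure has been recovered.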

Can I scrape dynamic websites using HTML content scraping methods?

Yes, you can scrape dynamic websites using HTML content scraping methods, but it may require additional techniques. For instance, using headless browsers or tools that can handle JavaScript rendering, like Selenium or Puppeteer, allows you to access and extract content that is populated dynamically after the initial HTML is loaded.

| Key Point | Description |
|---|---|
| Request for Information | The request is for detailed information based on HTML input. |
| HTML Requirement | A valid HTML document is necessary to scrape the content effectively. |
| Content Extraction | The extraction process is reliant on the HTML structure provided. |

Summary

HTML content scraping is a process that allows users to extract information from web pages efficiently. Accurate results depend on the HTML structure of the source page, so a scraper needs a valid HTML document to work from. With that in place, you can extract relevant details systematically, leading to better data management and use.