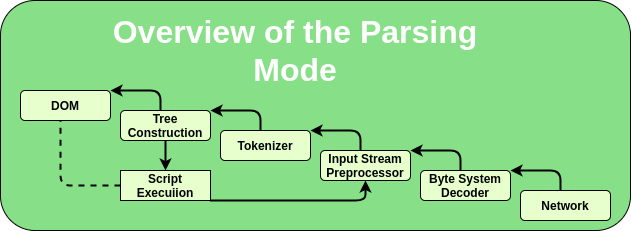

HTML document extraction is a core technique in web scraping: it pulls the useful information out of a web page and leaves the rest behind. By parsing the HTML, developers can walk the structure of a document and identify its essential elements, such as titles, body content, images, and metadata. The goal is to capture only pertinent data while discarding promotional and other insubstantial material. Accurate analysis of the HTML structure improves the quality of the extracted information and makes it easier to reuse across applications and platforms.

Retrieving information from web pages is also called web content extraction or data mining from HTML. Both terms cover the methods used to navigate the HTML tree and pull out the significant details embedded in it, usually with the help of programming tools. By paying attention to how content such as headers and images is arranged, a scraper can produce output that is easier to ingest downstream, which streamlines the workflow and makes online resources more usable.

Understanding HTML Document Structure

To effectively extract detailed information from a post, it is essential to first analyze the structure of the HTML document. HTML, or Hypertext Markup Language, serves as the backbone of web content, organizing text, images, and other media elements that make up a webpage. A typical HTML structure comprises various elements including `<h1>` for titles, `<p>` for paragraphs, `<img>` for images, and additional attributes that provide context such as links and metadata. By understanding these components, we can prioritize information during the extraction process.
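As a minimal sketch of how these components can be picked out, the example below uses Python's standard-library `html.parser` module. The class name `BasicExtractor` and the sample markup are assumptions made for illustration, and the parser only handles the simple, well-nested case.

```python
from html.parser import HTMLParser


class BasicExtractor(HTMLParser):
    """Collects <h1> text, <p> text, and <img> src values (illustrative only)."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self.paragraphs = []
        self.images = []
        self._current = None  # tag whose text is currently being collected
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "p"):
            self._current = tag
            self._buffer = []
        elif tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.images.append(src)

    def handle_data(self, data):
        # Text inside nested inline tags (such as <a>) is kept as well.
        if self._current is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            text = "".join(self._buffer).strip()
            if text:
                target = self.titles if tag == "h1" else self.paragraphs
                target.append(text)
            self._current = None


# Made-up sample page, not taken from any real site.
SAMPLE_HTML = """
<html><body>
  <h1>Sample Post</h1>
  <p>First paragraph with a <a href="/about">link</a>.</p>
  <img src="cover.png" alt="Cover">
  <p>Second paragraph.</p>
</body></html>
"""

extractor = BasicExtractor()
extractor.feed(SAMPLE_HTML)
print(extractor.titles)
print(extractor.paragraphs)
print(extractor.images)
```

Keeping titles, paragraphs, and images in separate lists makes it straightforward to prioritize one kind of content over another later in the pipeline.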

Analyzing the HTML structure also makes extraction systematic. Each tag has a distinct role in representing the document’s content, and knowing those roles is what lets a scraper parse accurately. This foundational knowledge is vital for web scraping, because it enables the scraper to separate significant information from irrelevant detail so that only coherent, valuable data is captured.

It is also important to consider the hierarchy of HTML elements. Elements like `<h2>` and `<h3>` provide subheadings under the main title, while `<ul>` and `<ol>` tags can list essential points. Understanding this hierarchy ensures that we extract information logically, preserving the context and meaning inherent in the content. This level of detail helps in creating richer datasets that can serve multiple purposes, whether for machine learning models or detailed reporting.
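One way to preserve that hierarchy during extraction is to record each heading with its level and attach list items to the heading they follow. The sketch below again relies on the standard-library `html.parser`. The `OutlineParser` class, the outline format, and the sample markup are assumptions for illustration, covering `<h1>` to `<h3>` and the `<li>` items found inside `<ul>` or `<ol>` lists.

```python
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Records headings with their level and attaches <li> items to the latest heading."""

    HEADINGS = {"h1": 1, "h2": 2, "h3": 3}

    def __init__(self):
        super().__init__()
        self.outline = []  # list of {"level": int, "title": str, "items": [str]}
        self._tag = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS or tag == "li":
            self._tag = tag
            self._buffer = []

    def handle_data(self, data):
        if self._tag is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != self._tag:
            return
        text = "".join(self._buffer).strip()
        if tag in self.HEADINGS:
            self.outline.append(
                {"level": self.HEADINGS[tag], "title": text, "items": []}
            )
        elif self.outline:
            # A list item belongs to the most recent heading seen so far.
            self.outline[-1]["items"].append(text)
        self._tag = None


# Made-up sample markup.
SAMPLE_OUTLINE_HTML = """
<h1>Guide</h1>
<h2>Setup</h2>
<ul><li>Install</li><li>Configure</li></ul>
<h3>Options</h3>
<ol><li>Verbose</li></ol>
"""

outline_parser = OutlineParser()
outline_parser.feed(SAMPLE_OUTLINE_HTML)
for node in outline_parser.outline:
    print("  " * (node["level"] - 1) + node["title"], node["items"])
```

Because every list item stays attached to its heading, the extracted points keep the context they had on the page.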

Awareness of HTML document structure also opens the door to more nuanced scraping methods. Tools and libraries built on this knowledge help developers and data analysts streamline their workflows and sift through large amounts of web data efficiently.

Techniques for Extracting Content from HTML

Content can be extracted from HTML in several ways, all of which rely on the underlying document structure. A common approach is to use Python tools such as Beautiful Soup, a parsing library, or Scrapy, a crawling and scraping framework. These tools let users navigate the HTML tags and extract content by referencing the particular tags that hold the data they seek, such as titles or articles.

Another crucial aspect of these web scraping techniques is the ability to handle dynamic content that may be rendered through JavaScript. Advanced scrapers can integrate practices like headless browsing to capture such dynamic elements. Additionally, proper handling of links and embedded media through attribute extraction ensures a comprehensive dataset that includes all necessary components of the webpage. By employing these techniques, data analysts can ensure that the extracted information is both relevant and representative of the original content.
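The attribute-extraction part of this can be sketched with the standard library alone. The example below collects link and media URLs and resolves relative ones against a base URL. The `AttributeCollector` class, the tag-to-attribute mapping, and the `example.com` URLs are assumptions for illustration. Rendering JavaScript-driven content needs a headless browser and is not shown here.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class AttributeCollector(HTMLParser):
    """Collects URL-bearing attributes from links and embedded media."""

    # Which attribute carries the URL for each tag of interest (illustrative subset).
    URL_ATTRS = {"a": "href", "img": "src", "video": "src", "source": "src"}

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.found = []  # list of (tag, absolute_url)

    def handle_starttag(self, tag, attrs):
        attr_name = self.URL_ATTRS.get(tag)
        if attr_name is None:
            return
        value = dict(attrs).get(attr_name)
        if value:
            # Resolve relative URLs so every entry can be fetched on its own.
            self.found.append((tag, urljoin(self.base_url, value)))


# Made-up sample markup and base URL.
SAMPLE_MEDIA_HTML = """
<a href="/about">About</a>
<img src="img/cover.png" alt="Cover">
<video controls><source src="https://cdn.example.com/clip.mp4"></video>
"""

collector = AttributeCollector("https://example.com/blog/")
collector.feed(SAMPLE_MEDIA_HTML)
for tag, url in collector.found:
    print(tag, url)
```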

With the rise of web content and its complexities, other techniques, such as XPath and CSS selectors, have emerged as pivotal in targeting specific data points within an HTML document. XPath, for instance, provides a way to navigate through elements and attributes in an XML-like manner, which can also be applied to HTML documents. This flexibility allows for targeted data extraction that can meet varied analytical needs.
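To illustrate path-based targeting, the sketch below uses `xml.etree.ElementTree` from the Python standard library, which supports only a limited subset of XPath and requires well-formed XML. The snippet is therefore a made-up, well-formed fragment. Real-world HTML is often not well-formed and would typically need a more tolerant third-party parser with fuller XPath support, such as lxml.

```python
import xml.etree.ElementTree as ET

# Made-up, well-formed markup; ElementTree cannot parse sloppy HTML.
XHTML = """
<html>
  <body>
    <div class="article">
      <h1>Sample Post</h1>
      <p>Intro text.</p>
      <p class="note">Side note.</p>
    </div>
    <div class="sidebar">
      <p>Unrelated teaser.</p>
    </div>
  </body>
</html>
"""

root = ET.fromstring(XHTML)

# Select only the paragraphs that are direct children of the article container.
article_paragraphs = [p.text for p in root.findall(".//div[@class='article']/p")]

# Select a single element by attribute value.
note = root.find(".//p[@class='note']").text

# findtext returns the text of the first match.
title = root.findtext(".//div[@class='article']/h1")

print(title)
print(article_paragraphs)
print(note)
```

The path expression keeps the sidebar teaser out of the results without any extra filtering code, which is the main appeal of selector-based targeting.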

As these techniques evolve, the focus should remain on data that is both accurate and gathered ethically. That means respecting the site’s robots.txt file, which tells automated clients which paths they may and may not request. Observing these rules helps maintain the integrity of the extraction process and fosters a healthier relationship between content providers and data gatherers.
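A robots.txt check can be sketched with the standard-library `urllib.robotparser` module. To keep the example self-contained, the rules are parsed from an inline string. The rules themselves, the `example-bot` user agent, and the `may_fetch` helper are hypothetical, and a real scraper would load the file from the target site instead.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; a real scraper would read the site's own robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

rules = RobotFileParser()
rules.parse(ROBOTS_TXT.splitlines())


def may_fetch(url, user_agent="example-bot"):
    """Return True if the parsed rules allow this user agent to request the URL."""
    return rules.can_fetch(user_agent, url)


print(may_fetch("https://example.com/blog/post"))
print(may_fetch("https://example.com/private/data"))
```

Running this check before every request keeps the compliance decision in one place instead of scattering it through the scraper.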

Best Practices for HTML Parsing

When parsing HTML, a few best practices make results more reliable. One is to check the validity of the markup before scraping: invalid HTML can cause a parser to build an unexpected tree, which leads to misread or missing content. A tool such as the W3C Markup Validation Service shows where a page departs from the standard, so you know in advance where extraction may need extra care.

Additionally, when parsing HTML, it is essential to focus on minimizing the risk of capturing ‘junk’ data. Implementing filters or conditions during the extraction process can help weed out irrelevant information, ensuring that the outcome is coherent and useful. This can be accomplished through regular expressions that match only necessary components or creating a schema to properly categorize the data you want to extract.
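The filtering and schema ideas can be combined in a short sketch. Here a dataclass plays the role of the schema and two regular expressions act as filters. The junk keywords, the minimum word count, the ISO date format, and all names (`Article`, `is_junk`, `build_article`) are assumptions chosen for illustration, and the sample paragraphs are made up.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Illustrative patterns; real projects would tune these to their own sources.
JUNK_PATTERN = re.compile(r"\b(subscribe|advertisement|sponsored|cookie)\b", re.IGNORECASE)
DATE_PATTERN = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


@dataclass
class Article:
    """Schema for the data we want to keep."""
    title: str
    paragraphs: List[str]
    published: Optional[str] = None


def is_junk(text, min_words=4):
    """Treat very short fragments and promotional phrases as junk."""
    return len(text.split()) < min_words or bool(JUNK_PATTERN.search(text))


def build_article(title, raw_paragraphs):
    """Filter the raw paragraphs and fit the result into the schema."""
    kept = [p.strip() for p in raw_paragraphs if not is_junk(p)]
    published = None
    for paragraph in raw_paragraphs:
        match = DATE_PATTERN.search(paragraph)
        if match:
            published = match.group(0)
            break
    return Article(title=title.strip(), paragraphs=kept, published=published)


# Made-up extracted text.
raw = [
    "Published on 2024-05-01 by the editorial team.",
    "Subscribe to our newsletter for more updates!",
    "Read more",
    "HTML parsers turn markup into a tree of elements.",
]

article = build_article(" Sample Post ", raw)
print(article)
```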

To optimize this process, modularizing the scraping scripts can enhance maintainability and scalability. Designing reusable parsing functions allows developers to adjust and expand their scraping capabilities without rewriting code from scratch, which is a common challenge in web development. Moreover, documenting the assumptions made during the parsing process helps other team members understand the rationale behind the code, promoting collaborative improvements.
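A rough sketch of this modular style follows: one generic helper does the parsing, and small named functions reuse it while stating their assumptions in comments. All function names and the sample page are hypothetical. The helper assumes matching open and close tags, so markup with unclosed `<p>` elements would need a more tolerant parser.

```python
from html.parser import HTMLParser


class _TagTextCollector(HTMLParser):
    """Collects the text content of every element with a given tag name."""

    def __init__(self, tag):
        super().__init__()
        self.tag = tag
        self.results = []
        self._depth = 0  # how many matching tags are currently open
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == self.tag:
            self._depth += 1
            if self._depth == 1:
                self._buffer = []

    def handle_data(self, data):
        if self._depth:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self.tag and self._depth:
            self._depth -= 1
            if self._depth == 0:
                # Collapse runs of whitespace into single spaces.
                self.results.append(" ".join("".join(self._buffer).split()))


def extract_text(html, tag):
    """Reusable building block: text of every <tag> element, whitespace collapsed."""
    collector = _TagTextCollector(tag)
    collector.feed(html)
    return collector.results


def extract_title(html):
    # Assumption: the first <h1> on the page is the document title.
    titles = extract_text(html, "h1")
    return titles[0] if titles else None


def extract_paragraphs(html):
    # Assumption: empty paragraphs carry no content and can be dropped.
    return [text for text in extract_text(html, "p") if text]


# Made-up sample page.
PAGE = "<h1>Release  Notes</h1><p>Fixed a bug.</p><p> </p><p>Added <b>XPath</b> support.</p>"

print(extract_title(PAGE))
print(extract_paragraphs(PAGE))
```

When a site changes its layout, only the small function tied to that layout needs to change, while the shared helper stays as it is.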

Finally, it’s vital to keep up with the dynamic landscape of web technologies. Websites frequently update their layouts and structures, which can necessitate changes in the scraping strategy. Regularly reviewing and updating the parsing scripts according to these changes is essential to ensure consistent and effective data extraction from HTML.

Frequently Asked Questions

What is HTML document extraction and why is it important?

HTML document extraction involves analyzing the structure of HTML files to extract relevant content such as titles, articles, and media. This process is crucial for gathering structured data from the web efficiently, enabling better data organization and usability.

How do HTML parsing techniques facilitate extracting content from HTML?

HTML parsing techniques facilitate extracting content from HTML by breaking down the document into manageable components. By accurately identifying and handling tags like <h1> for titles and <p> for paragraphs, data extraction can be performed to gather only the information relevant to your needs.

What are the best practices for data extraction from HTML documents?

Best practices for data extraction from HTML documents include using reliable web scraping techniques, ensuring proper parsing of HTML tags, cleaning the extracted data to remove incoherent elements, and complying with legal standards to avoid issues with web scraping.

What role does HTML structure analysis play in web scraping?

HTML structure analysis plays a critical role in web scraping by enabling scrapers to efficiently navigate and extract data from differently structured web pages. Understanding how to interpret the structure allows for effective selection of valuable content while disregarding irrelevant data.

How can I ensure quality content during HTML document extraction?

To ensure quality content during HTML document extraction, focus on parsing important tags correctly, removing promotional or junk information, and checking for coherence in the extracted data. This way, you can compile high-quality content that meets your information needs.

What tools are recommended for effective HTML parsing and data extraction?

Recommended tools for effective HTML parsing and data extraction include libraries like Beautiful Soup and Scrapy for Python, which offer robust methods for parsing HTML documents and handling various web scraping tasks efficiently.

Is it legal to use web scraping techniques for HTML document extraction?

The legality of using web scraping techniques for HTML document extraction can vary based on website terms of service and local regulations. Always check website policies and consider ethical practices before proceeding with scraping.

What are some common challenges faced during HTML document extraction?

Common challenges during HTML document extraction include dealing with inconsistent HTML structures, dynamic content loaded via JavaScript, and navigating anti-scraping measures implemented by websites. Proper tools and strategies can help mitigate these issues.

| Key Component | Description |
|---|---|
| Title | The main heading or subject of the document, marked by `<h1>` tags. |
| Main Body | The core content to be extracted, typically enclosed in `<p>` tags. |
| Images and Media | Visual elements included via `<img>` tags; may also include videos or other media types. |
| Metadata | Information about the document, such as author and publication date. |

Summary

HTML document extraction is a vital process that involves analyzing and parsing the structure of an HTML document to collect detailed information. This process includes identifying key components like the title, main body, images, and metadata to ensure relevant and meaningful content is captured. By correctly parsing HTML tags, such as `<h1>` for titles and `<p>` for paragraphs, the extraction process helps preserve the integrity and coherence of the information collected. It also keeps promotional or incoherent elements out of the result, maintaining high content quality standards.
