Web scraping is a practical skill for anyone who wants to work with data published on the web. As more decisions come to depend on data, knowing how to scrape can support both personal and professional projects. With the right web scraping tools and data extraction methods, you can compile information ranging from market trends to competitor analysis. This guide covers best practices for web scraping so that your methods are both efficient and ethical, and it points to web scraping tutorials that can help you get started.

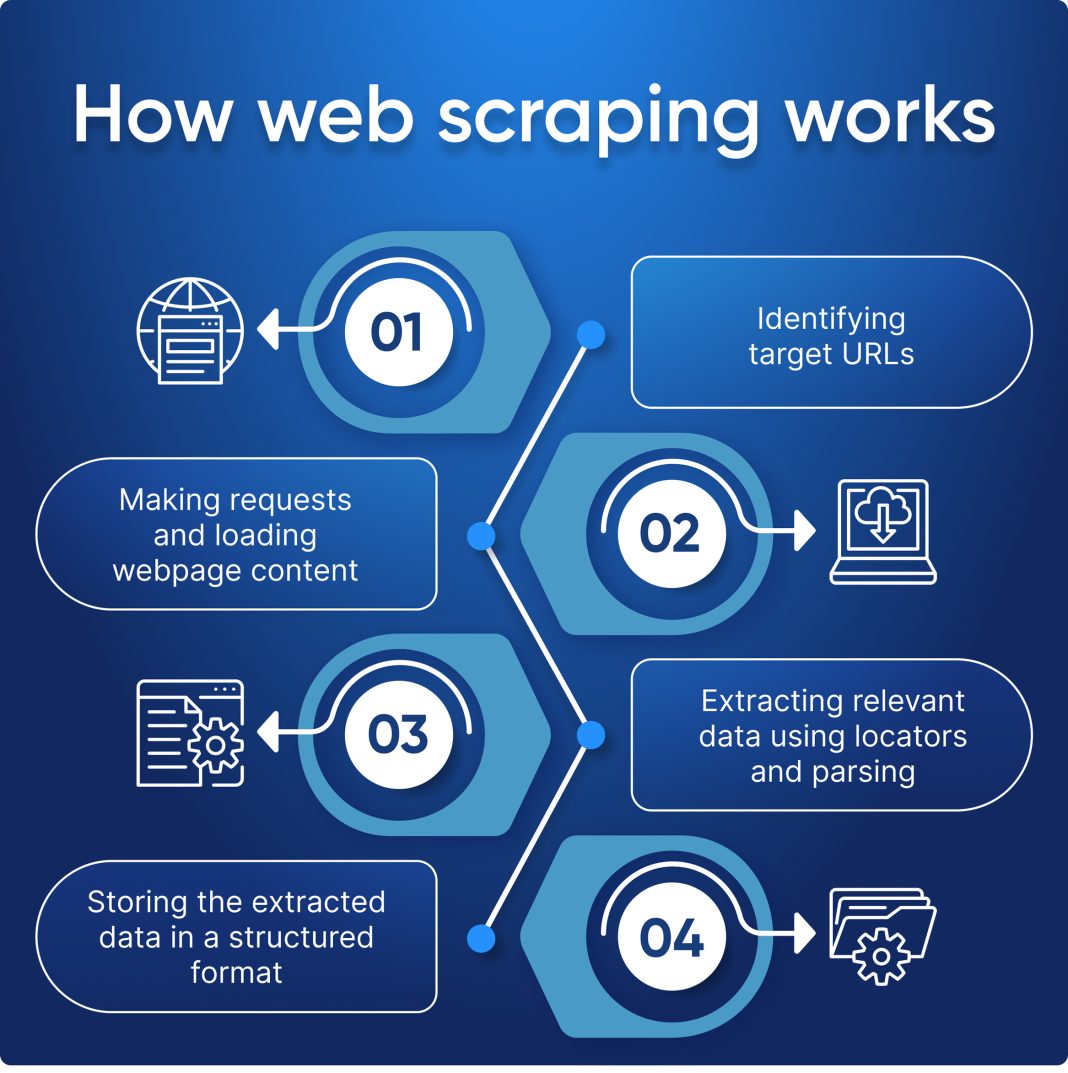

Learning the fundamentals of extracting web data is useful for analysts and marketers alike. Often referred to as data harvesting or web data extraction, the process involves systematically retrieving and processing information from online sources. Approaches range from full crawling frameworks to short scripts, and the choice depends on the job. The sections below cover the tools, the common extraction methods, and the challenges of retrieving web content, whether your goal is market insight or content aggregation.

Understanding Web Scraping Tools

When starting with web scraping, it’s essential to familiarize yourself with the tools available. Popular options include Beautiful Soup, Scrapy, and Selenium, each catering to different scraping needs. Beautiful Soup is a Python library for parsing HTML and XML, which makes it a good fit for beginners who need to extract information from static web pages. Scrapy, on the other hand, is a more comprehensive framework suited to larger projects: developers write spiders that follow links and crawl many pages concurrently. Selenium is useful when a website renders content dynamically in response to user interactions, because it automates a real web browser and can mimic human behavior.

Choosing the right web scraping tool often depends on the complexity of your project and your proficiency with programming. For those new to coding, starting with Beautiful Soup can help you grasp the foundational aspects of web scraping without overwhelming yourself. As you become more proficient, transitioning to Scrapy or incorporating Selenium can enhance your scraping capabilities, enabling you to tackle more sophisticated tasks. Ultimately, understanding the strengths and weaknesses of each tool will empower you to effectively extract and analyze data across various web platforms.
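As a minimal sketch of the static-page parsing described above, the example below pulls link targets and link text out of an HTML string. It uses Python's standard-library `html.parser` as a stand-in so that it runs without installing anything; Beautiful Soup offers a more convenient interface for the same job. The `LinkCollector` class, the `extract_links` helper, and the sample markup are made up for illustration.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect (href, text) pairs for every <a> element that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []      # finished (href, text) pairs
        self._href = None    # href of the <a> currently open, if any
        self._text = []      # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only keep text that appears inside an open link.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def extract_links(html):
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical sample page, used only for illustration.
SAMPLE_HTML = """
<ul>
  <li><a href="/products/1">Blue <b>Widget</b></a></li>
  <li><a href="/products/2">Red Widget</a></li>
  <li><a>No target</a></li>
</ul>
"""

links = extract_links(SAMPLE_HTML)
for href, text in links:
    print(href, "->", text)
```

Anchors without an `href` are skipped on purpose, since they carry no target to follow.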

Best Practices for Web Scraping

Implementing best practices in web scraping is crucial for maintaining ethical standards and ensuring long-term success. Before starting any scraping project, review the website’s robots.txt file, which states which paths automated clients are asked to stay away from. Following these directives does not by itself guarantee legal protection, but it reduces the risk of disputes and fosters a respectful relationship between data scrapers and website owners. Additionally, always respect the site’s terms of service concerning data usage and scraping frequency; excessive requests can overwhelm the server and lead to temporary or permanent bans.
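The robots.txt check can be sketched with Python's standard-library `urllib.robotparser`. To keep the example runnable offline, it parses a made-up robots.txt string rather than downloading one; the user agent name `example-scraper`, the `example.com` URLs, and the rules themselves are assumptions for illustration only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real one lives at <site>/robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-scraper"  # assumed name for this sketch


def build_parser(robots_txt):
    """Return a RobotFileParser loaded from robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# Ask whether our user agent may fetch specific URLs.
allowed_public = parser.can_fetch(USER_AGENT, "https://example.com/catalog/page1")
allowed_private = parser.can_fetch(USER_AGENT, "https://example.com/private/report")

# Crawl-delay, if the site declares one, is the requested pause in seconds.
delay = parser.crawl_delay(USER_AGENT)

print(allowed_public, allowed_private, delay)
```

Against a live site, the same parser can be pointed at the real file with `set_url(...)` followed by `read()` instead of `parse(...)`. A sensible scraper would then sleep for at least `delay` seconds between requests when a value is declared.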

Another important best practice involves optimizing your code for efficiency and clarity. This can include using Python’s built-in functionalities and libraries intelligently to minimize requests and process data effectively once extracted. Employing techniques such as caching data locally can significantly reduce server load and speed up your scraping tasks. Furthermore, documenting your code and scraping strategies can serve as a valuable resource for future projects, helping others understand your methods and potentially improving your workflow. Following these best practices not only enhances your scraping skills but also builds credibility within the data analysis community.
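One possible shape for the local caching mentioned above is a small on-disk cache keyed by a hash of the URL. This is a sketch under stated assumptions: `PageCache` and `fake_fetch` are hypothetical names, the fetch step is a stand-in that never touches the network, and there is no expiry logic, which a real cache would likely need.

```python
import hashlib
import tempfile
from pathlib import Path


class PageCache:
    """Store fetched page bodies on disk, keyed by a hash of the URL."""

    def __init__(self, directory):
        self.directory = Path(directory)
        self.directory.mkdir(parents=True, exist_ok=True)

    def _path_for(self, url):
        digest = hashlib.sha256(url.encode("utf-8")).hexdigest()
        return self.directory / f"{digest}.html"

    def get_or_fetch(self, url, fetch):
        """Return the cached body for url, calling fetch(url) only on a miss."""
        path = self._path_for(url)
        if path.exists():
            return path.read_text(encoding="utf-8")
        body = fetch(url)
        path.write_text(body, encoding="utf-8")
        return body


# Stand-in for a real HTTP call, so the example runs offline.
calls = []


def fake_fetch(url):
    calls.append(url)
    return f"<html><body>content of {url}</body></html>"


with tempfile.TemporaryDirectory() as tmp:
    cache = PageCache(tmp)
    first = cache.get_or_fetch("https://example.com/a", fake_fetch)
    second = cache.get_or_fetch("https://example.com/a", fake_fetch)

print(len(calls))  # the second lookup is served from disk
```

Passing the fetch function in as an argument keeps the cache independent of any particular HTTP library and makes it easy to test.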

Data Extraction Methods in Web Scraping

Understanding the different data extraction methods is essential for any web scraping project. The primary technique involves web crawling, which systematically navigates through web pages, fetching data as it goes. This approach is effective for gathering information from multiple sources, enabling comprehensive datasets for analysis. Techniques such as XPath and CSS selectors are commonly used within scraping frameworks to target specific HTML elements, ensuring that the data extracted is both relevant and structured. Utilizing these methods can improve the quality of the data collected and streamline the analysis process.
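To illustrate how path expressions target specific elements, here is a small sketch using the limited XPath subset in Python's standard-library `xml.etree.ElementTree`. It assumes the markup is well-formed XML, which real-world HTML often is not; scraping frameworks ship more tolerant parsers with fuller XPath and CSS selector support. The sample markup and the `extract_products` helper are invented for this example.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed sample markup.
SAMPLE_MARKUP = """
<html>
  <body>
    <div class="product">
      <h2 class="name">Blue Widget</h2>
      <span class="price">19.99</span>
    </div>
    <div class="product">
      <h2 class="name">Red Widget</h2>
      <span class="price">24.50</span>
    </div>
    <div class="banner">Free shipping this week</div>
  </body>
</html>
"""


def extract_products(markup):
    """Return one dict per <div class="product"> found in the markup."""
    root = ET.fromstring(markup)
    products = []
    # ".//div[@class='product']" selects matching divs at any depth.
    for node in root.findall(".//div[@class='product']"):
        name = node.findtext("h2[@class='name']")
        price = node.findtext("span[@class='price']")
        products.append({"name": name, "price": float(price)})
    return products


products = extract_products(SAMPLE_MARKUP)
for product in products:
    print(product["name"], product["price"])
```

The banner `div` is ignored because its `class` attribute does not match the predicate. In CSS selector terms, the outer expression corresponds roughly to `div.product`.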

Another key method in data extraction is the use of API requests when available. Many websites offer APIs that can provide data in a structured format, such as JSON or XML, which can be far easier to manipulate than scraped HTML. Using APIs not only simplifies the extraction process but also adheres to the intended use of the data as set by the website. However, when APIs are not an option, robust data extraction methods must be employed to ensure the scraping process is efficient and effective, ultimately yielding the valuable insights needed for business intelligence.
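The difference in effort is easy to see in a sketch: once an API returns JSON, extraction becomes ordinary dictionary access. The response body, its field names (`items`, `price`, `amount`, `next_page`), and the `parse_items` helper below are all hypothetical; a real API defines its own schema in its documentation.

```python
import json

# Hypothetical API response body, used only for illustration.
SAMPLE_RESPONSE = """
{
  "items": [
    {"id": 1, "name": "Blue Widget", "price": {"amount": 19.99, "currency": "USD"}},
    {"id": 2, "name": "Red Widget", "price": {"amount": 24.5, "currency": "USD"}}
  ],
  "next_page": null
}
"""


def parse_items(payload):
    """Flatten the assumed response schema into simple records."""
    data = json.loads(payload)
    return [
        {"id": item["id"], "name": item["name"], "price": item["price"]["amount"]}
        for item in data.get("items", [])
    ]


items = parse_items(SAMPLE_RESPONSE)
for item in items:
    print(item)
```

In a real script the payload would come from an HTTP request, for example via `urllib.request.urlopen`, rather than from a string constant.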

Web Scraping Tutorials for Beginners

For those new to the world of web scraping, engaging with comprehensive tutorials can vastly improve your skills and knowledge. Various online platforms offer step-by-step guides that walk you through the process of setting up your first scraping project. These resources typically cover essential topics such as Python setup, selecting the appropriate libraries, and crafting your initial script to extract data. Many tutorials also include hands-on practice projects, allowing you to apply what you’ve learned in real-world scenarios, solidifying the concepts in a practical way.

Aside from traditional textual tutorials, video guides also offer a visually intuitive approach to learning web scraping. These can often provide insights into troubleshooting common issues and best practices in real-time. By following along with an instructor, beginners can better grasp the nuances of web scraping, such as handling dynamic content with Selenium or leveraging Beautiful Soup for simpler tasks. The combination of theoretical knowledge and practical application through varied forms of tutorials establishes a robust foundation for anyone looking to master web scraping.

Common Uses for Web Scraping in Various Industries

Web scraping has become an invaluable tool across numerous industries, significantly enhancing data-driven decision-making. In the realm of market research, businesses can utilize scraping to monitor competitor pricing, product availability, and consumer reviews, providing insights that can inform pricing strategies and marketing efforts. This competitive intelligence allows companies to respond swiftly to market changes and consumer demands, maintaining an edge in their respective fields. Additionally, sectors like academic research and journalism use web scraping to gather articles, papers, and news updates, facilitating comprehensive research and reporting.

Moreover, price-comparison services use web scraping to aggregate product details from various online stores, creating a single place for consumers to compare prices and products. This boosts customer convenience and makes shopping more informed and efficient. Similarly, the travel industry uses web scraping to compile data from flight and hotel booking sites, allowing users to find the best deals in one place. As the demand for aggregated data continues to grow, web scraping methods will likely evolve, adapting to new technologies and changing consumer needs.

Challenges in Web Scraping and How to Overcome Them

While web scraping presents numerous opportunities, it does come with its own set of challenges that practitioners must navigate. One of the primary concerns is dealing with anti-scraping measures implemented by website owners, such as CAPTCHAs, IP blocking, and aggressive rate limiting. These techniques can disrupt scraping plans and require strategizing to find effective workarounds, such as rotating IP addresses or utilizing proxies. Understanding how to identify and approach these obstacles is paramount for those aiming to scrape data efficiently and ethically.
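Before reaching for proxies, it is often enough to slow down when a server signals rate limiting. The sketch below shows exponential backoff, which is a different technique from the IP rotation mentioned above. `RateLimited`, `fetch_with_backoff`, and `flaky_fetch` are hypothetical names, and the fetch function is a stand-in that fails twice and then succeeds, so the example runs offline and without real waiting.

```python
import time


class RateLimited(Exception):
    """Raised by a fetch function when the server signals rate limiting."""


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), doubling the wait after each rate-limited attempt."""
    for attempt in range(max_attempts):
        try:
            return fetch(url)
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # give up after the final attempt
            sleep(base_delay * 2 ** attempt)


# Offline demonstration: a fake fetch that is rate limited twice, then succeeds.
attempts = []
waits = []


def flaky_fetch(url):
    attempts.append(url)
    if len(attempts) < 3:
        raise RateLimited(url)
    return "ok"


# waits.append replaces time.sleep so the demo records delays instead of pausing.
result = fetch_with_backoff(flaky_fetch, "https://example.com/data", sleep=waits.append)
print(result, waits)
```

In a real scraper, the fetch function would raise `RateLimited` when it sees a response such as HTTP 429, and the default `time.sleep` would be left in place.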

Another challenge lies in managing the variability of web page structures, which can change frequently. Websites can update their layouts or even their underlying technologies, which can result in broken scripts and halted data extraction processes. To combat this, it is essential to write flexible and adaptable scraping code that can handle minor changes in HTML structure. Regularly revisiting your scraping scripts and keeping abreast of changes in target websites can significantly mitigate the impact of these structural challenges, ensuring a smoother and more robust web scraping experience.
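One way to make extraction code tolerant of layout changes is to try several candidate paths in order and to report a miss explicitly instead of failing silently. The following sketch assumes well-formed markup and uses the standard-library `xml.etree.ElementTree`; the paths, class names, and sample layouts are all hypothetical.

```python
import xml.etree.ElementTree as ET

# Candidate paths, ordered from the current layout to an older one.
# Both are assumptions made up for this sketch.
PRICE_PATHS = [
    ".//span[@class='price']",
    ".//div[@class='product-price']",
]


def find_price(markup, paths=PRICE_PATHS):
    """Return the first non-empty price text found, or None if no path matches."""
    root = ET.fromstring(markup)
    for path in paths:
        node = root.find(path)
        if node is None:
            continue
        text = (node.text or "").strip()
        if text:
            return text
    return None  # signals that the page layout is no longer recognized


NEW_LAYOUT = "<div><span class='price'>19.99</span></div>"
OLD_LAYOUT = "<div><div class='product-price'> 19.99 </div></div>"
UNKNOWN_LAYOUT = "<div><p>19.99</p></div>"

for layout in (NEW_LAYOUT, OLD_LAYOUT, UNKNOWN_LAYOUT):
    print(find_price(layout))
```

Returning `None` for an unrecognized layout gives the calling code a clear point at which to log a warning, so a site redesign shows up as an alert rather than as quietly missing data.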

Legal and Ethical Considerations in Web Scraping

As web scraping becomes more prevalent, understanding the legal and ethical implications is crucial for practitioners. Many websites have terms of service that explicitly set out the rules for data usage and scraping activities. Ignoring these can lead to legal action from website owners or to bans. It is also wise to consider the ethical aspects of scraping, such as respecting individuals’ privacy and ensuring that the data collected is used responsibly. Maintaining ethical standards goes beyond compliance; it also fosters trust within the online community.

Furthermore, ensuring the security of the data you collect is an essential ethical consideration. Data breaches can have severe consequences, both for the scraper and the source website. Implementing cybersecurity best practices to protect the data gathered during scraping activities is paramount. Encrypting sensitive information and following data protection regulations can safeguard against potential legal issues stemming from misuse or exposure of data, establishing a responsible framework for web scraping.

Future Trends in Web Scraping

Web scraping is likely to keep evolving alongside advances in technology and the increasing complexity of websites. As artificial intelligence (AI) and machine learning (ML) continue to improve, automated scraping tools may become better at adapting to changes in page structure with less human intervention. This could make data extraction more reliable, allowing businesses to work with fresher data and stay competitive in their markets. Moreover, as more companies recognize the value of publicly available data, demand for sophisticated web scraping solutions is likely to grow.

Additionally, with the rise of data privacy concerns and regulations such as GDPR, web scraping may face stricter scrutiny. Ethical data collection practices will thus become a focal point within the industry, prompting the development of tools that prioritize compliance and transparency. Organizations that can navigate these emerging challenges while innovating their scraping methodologies will likely find themselves at the forefront of data-driven strategies in the future. As the landscape of web scraping continues to shift, staying abreast of these trends will be crucial for practitioners looking to harness the full potential of data extraction.

Frequently Asked Questions

What is Web Scraping and how does it work?

Web scraping is the process of programmatically retrieving data from websites. A scraper requests a page, parses the returned HTML with a library such as Beautiful Soup, and extracts the specific pieces of information you want to analyze or process.

What are the best practices for web scraping?

When engaging in web scraping, it is crucial to follow best practices to avoid legal issues. Always check a website’s robots.txt file to understand its scraping policies and limitations. Additionally, respect the website’s terms of service and implement respectful scraping rates to minimize server load.

What tools are available for web scraping?

Several powerful web scraping tools are available that cater to different needs. Some of the most popular ones include Beautiful Soup, a Python library for parsing HTML; Scrapy, an open-source framework for web crawling; and Selenium, which automates web browsers to extract dynamic content. Each tool offers unique features depending on your web scraping project.

How can I learn web scraping basics?

To grasp the basics of web scraping, you can start by exploring web scraping tutorials that provide step-by-step guidance on various data extraction methods. Online courses and coding bootcamps also offer practical examples to familiarize yourself with key concepts and tools, ensuring a solid foundation.

What are the common uses of web scraping in data analysis?

Web scraping is widely used in data analysis for tasks such as collecting data from different sources to identify trends and insights. By aggregating content from various websites, you can conduct market research, monitor competitor pricing, and perform detailed analysis, which enhances decision-making based on factual data.

| Topic | Description |
|---|---|
| What is Web Scraping? | A technique for programmatically retrieving content from web pages to extract specific data for analysis or processing. |
| Common Uses | 1. Data Analysis: Collecting data to derive insights and trends. 2. Market Research: Monitoring competitors’ pricing and offerings. 3. Content Aggregation: Gathering data from various websites for a comprehensive resource. |
| Tools for Web Scraping | 1. Beautiful Soup: A Python library for parsing HTML/XML. 2. Scrapy: An open-source web crawling framework for Python. 3. Selenium: Automates web browsers for scraping dynamic content. |
| Best Practices | Check the website’s robots.txt file and terms of service, and respect policies on scraping frequency and data use. |

Summary

The basics of web scraping come down to understanding how data is extracted from websites. With the tools and techniques covered here, individuals and businesses can gather insights, conduct market research, and aggregate content effectively. Adhering to best practices keeps web scraping ethical and aligned with the rules set by webmasters, which makes data collection sustainable.