Web scraping is a powerful tool for data extraction that empowers users to efficiently collect vast amounts of information from websites. This automated process allows businesses and individuals to tap into valuable data that can drive decisions in areas such as market research, price comparison, and data mining. In this comprehensive guide, we will explore the intricacies of Python web scraping, showcasing popular libraries such as Beautiful Soup and Scrapy to streamline the extraction process. Moreover, we aim to address the importance of ethical web scraping, ensuring that your data collection practices respect the boundaries set by website owners. Join us on this journey as we demystify web scraping and provide practical insights to enhance your skills and ensure compliance with best practices.

Web scraping, sometimes called data harvesting, also supports competitive research and real-time monitoring. With Python and libraries such as Beautiful Soup and Scrapy, much of the navigation and collection work can be automated. Throughout, we emphasize responsible data collection so that extraction stays both respectful and effective.

Understanding Web Scraping: An Overview

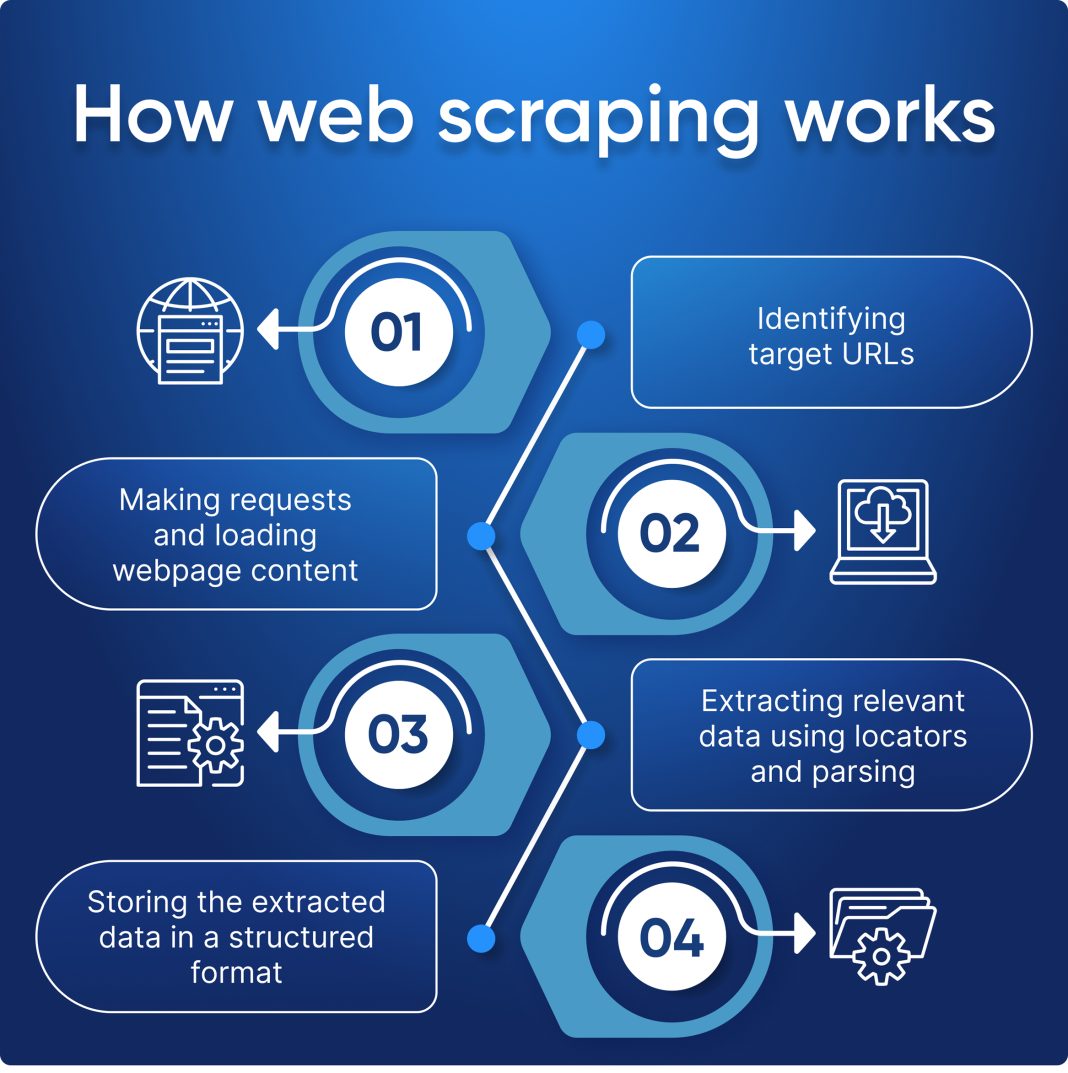

Web scraping has emerged as a powerful tool for data extraction, enabling businesses and individuals to automate the process of gathering information from various online sources. By utilizing software applications designed specifically for this purpose, users can collect large volumes of data efficiently. This method not only saves time but also enhances accuracy, as automated processes minimize the risk of human error. As information continues to grow exponentially on the web, the ability to harness this data through web scraping becomes increasingly valuable.

Moreover, web scraping serves numerous applications, from market research to competitive analysis. For instance, businesses can use web scraping to track prices offered by competitors, allowing them to make informed pricing decisions. Additionally, researchers can mine large datasets for trends and insights that would generally be resource-intensive to acquire manually. As we delve further into this tutorial, we will explore the nuances of web scraping, highlighting the various methodologies and technologies that make it possible.

Introduction to Python Web Scraping

Python has become one of the most popular programming languages for web scraping due to its simplicity and the powerful libraries it offers. Libraries such as Beautiful Soup and Scrapy significantly reduce the complexity of tasks related to data extraction. Beautiful Soup, for example, makes it easy to navigate HTML and XML documents, allowing developers to search for specific tags and elements effortlessly. This library is particularly useful for beginners, as it provides a user-friendly interface to work with structured data.

On the other hand, Scrapy is a more advanced framework that facilitates the development of web scraping applications. It allows for scalable web crawling and can manage multiple pages simultaneously, making it perfect for projects that require extensive data collection. Scrapy’s built-in support for managing requests and handling responses simplifies the scraping process. Throughout this guide, we will provide a step-by-step Beautiful Soup tutorial and a Scrapy guide to help you harness the full potential of Python for your web scraping endeavors.

Beautiful Soup Tutorial: Scraping with Ease

The Beautiful Soup library is designed to make web scraping straightforward, especially for those who are new to programming. Its simple syntax handles the parsing of HTML and XML documents. To begin, install the library (it is published on PyPI as `beautifulsoup4`) and familiarize yourself with its core features. Beautiful Soup itself only parses markup; it does not download pages. The process therefore generally involves sending a request to a web page with an HTTP client, receiving the HTML response, and then parsing that response with Beautiful Soup to locate and extract the required data.
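The request, receive, and parse steps described above can be sketched roughly as follows. This is a minimal illustration, not a definitive implementation: it assumes the standard library's `urllib.request` for the HTTP step (a third-party client would work equally well), and the function names and the `example-scraper/0.1` User-Agent string are hypothetical.

```python
from urllib.request import Request, urlopen

from bs4 import BeautifulSoup


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send a GET request and return the response body as text."""
    # Hypothetical User-Agent; a descriptive one lets site owners identify the scraper.
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        # Fall back to UTF-8 when the server does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def extract_title_and_links(html: str) -> dict:
    """Parse HTML and pull out the page title and every link target."""
    # "html.parser" is Python's built-in parser, so no extra install is needed.
    soup = BeautifulSoup(html, "html.parser")
    title = soup.title.get_text(strip=True) if soup.title else None
    # href=True skips anchor tags that have no href attribute.
    links = [a["href"] for a in soup.find_all("a", href=True)]
    return {"title": title, "links": links}
```

A typical call would chain the two steps, for example `extract_title_and_links(fetch_html("https://example.com/"))`. Keeping the download and the parsing in separate functions makes the parsing logic easy to try out on saved HTML without touching the network.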

Once you have set up Beautiful Soup, the next step is to effectively navigate the parsed document. This involves understanding the structure of the HTML and identifying the specific tags that house the information you want to scrape. Various methods provided by Beautiful Soup, such as `find()`, `find_all()`, and CSS selectors, enable you to locate the data precisely. By following this tutorial, you will learn practical techniques to automate the collection of data using Beautiful Soup, making your web scraping projects far more efficient.
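To make the three lookup styles concrete, here is a small sketch that runs against an inline HTML snippet. The markup, class names, and product data are invented for illustration only.

```python
from bs4 import BeautifulSoup

# Hypothetical product listing, used only to illustrate the lookup methods.
SAMPLE_HTML = """
<div id="catalog">
  <h1 class="heading">Spring Catalog</h1>
  <ul>
    <li class="product"><span class="name">Kettle</span><span class="price">24.50</span></li>
    <li class="product"><span class="name">Toaster</span><span class="price">31.00</span></li>
  </ul>
</div>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# find() returns the first matching tag, or None when nothing matches.
heading = soup.find("h1", class_="heading").get_text(strip=True)

# find_all() returns every matching tag as a list.
names = [tag.get_text(strip=True) for tag in soup.find_all("span", class_="name")]

# select() takes a CSS selector; here: price spans directly inside product items.
prices = [float(tag.get_text()) for tag in soup.select("li.product > span.price")]

# Pair each product name with its price.
products = list(zip(names, prices))
print(heading)
print(products)
```

On real pages, `find()` can return `None`, so production code would normally check the result before calling `get_text()` on it.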

Scrapy Guide: Advanced Web Scraping Techniques

Scrapy is an industrial-strength web scraping framework that extends the functionality of Python for more complex applications. Unlike simpler libraries, Scrapy is designed to handle large-scale web scraping projects, allowing you to crawl multiple websites and gather data concurrently. Its architecture is built around the concept of spiders, which are customized classes responsible for navigating the web and extracting data from various pages. This guide is aimed at helping you set up Scrapy, design your first spider, and understand how to process the data you collect.

One significant advantage of Scrapy is its built-in support for asynchronous network requests: it can keep many requests in flight at once rather than waiting for each response in turn, which drastically improves the speed of data extraction. Scrapy also integrates with data storage: feed exports write items to files such as JSON or CSV, and item pipelines can send them to databases or APIs without additional manual steps. By following this Scrapy guide, you will learn how to use these features to create robust web scrapers that cope with challenges such as pagination and AJAX-loaded content. Note that Scrapy does not execute JavaScript on its own, so AJAX-loaded data usually has to be fetched by requesting the underlying endpoint directly or by adding a rendering tool.

Ethical Web Scraping: Best Practices

While web scraping opens the doors to a wealth of data, it also raises ethical considerations that must be addressed. Practicing ethical web scraping involves respecting the terms and conditions stipulated by websites, avoiding the overload of servers, and ensuring that the collected data is used responsibly. Before scraping a website, it is vital to review its robots.txt file, found at the root of the site (for example `https://example.com/robots.txt`), which indicates which sections are off-limits for automated access. Following it helps you comply with the site’s guidelines and reduces the risk of legal issues, although robots.txt is a convention rather than a substitute for reading the site’s terms.
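Checking robots.txt can be automated with Python's standard library. The sketch below parses a few inline rules so that it runs offline; the rules, the `example-scraper` bot name, and the URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-scraper"  # hypothetical bot name


def build_parser(robots_lines):
    """Create a parser from robots.txt lines that were already downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser


# Inline rules keep the sketch offline; for a live site, call
# parser.set_url("https://example.com/robots.txt") followed by parser.read().
rules = [
    "User-agent: *",
    "Disallow: /private/",
    "Crawl-delay: 5",
]
parser = build_parser(rules)

# can_fetch() reports whether the given user agent may request the URL.
allowed = parser.can_fetch(USER_AGENT, "https://example.com/products/")
blocked = parser.can_fetch(USER_AGENT, "https://example.com/private/report")

# crawl_delay() returns the requested pause in seconds, or None if unspecified.
delay = parser.crawl_delay(USER_AGENT)
print(allowed, blocked, delay)
```

A scraper would typically run this check once per site, skip any URL for which `can_fetch()` returns `False`, and honor the crawl delay when one is given.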

In addition, ethical considerations extend to the usage of data once it’s collected. It’s crucial to avoid using scraped data for malicious purposes, such as spamming or identity theft. The principles of proper attribution and copyright should be observed, especially when sharing or publishing findings based on scraped data. By adhering to these ethical web scraping guidelines, you ensure that your data collection efforts contribute positively to the digital landscape.

Applications of Web Scraping in Various Industries

Web scraping is not limited to any single industry; its applications span finance, e-commerce, healthcare, and more. In the finance sector, for instance, traders utilize web scraping to collect real-time data on stock prices and news articles, enabling them to make informed trading decisions quickly. E-commerce businesses harness web scraping tools to compare prices across different platforms, ensuring that they remain competitive in a rapidly changing market.

Similarly, in healthcare, web scraping can be instrumental in gathering data from research publications, clinical trial registries, and patient reviews. By compiling such data, organizations can derive insights that contribute to better healthcare outcomes and improved patient experiences. This versatility emphasizes the importance of web scraping as a fundamental technology, aiding industries in making data-driven decisions.

Navigating Legal Challenges in Web Scraping

As the practice of web scraping becomes more prevalent, understanding the legal landscape surrounding it is essential. In many jurisdictions, scraping publicly available data is generally permissible; however, complications arise when dealing with terms of service agreements and copyright laws. Some websites explicitly prohibit scraping through their terms, and violating these can result in legal action. Therefore, before engaging in web scraping, it is crucial to perform due diligence and ensure compliance with applicable laws.

Moreover, the recent rise in case law surrounding web scraping has prompted the need for businesses to consider risk management strategies. This may include implementing technical measures such as rate limiting to avoid overwhelming target servers, which can help prevent IP bans. By navigating the legal challenges effectively, web scrapers can focus on their data extraction goals while minimizing potential liabilities.
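Rate limiting of the kind mentioned above can be as simple as enforcing a minimum gap between requests. The following is a minimal sketch under that assumption; the `Throttle` class and its parameters are hypothetical names, not part of any library.

```python
import time


class Throttle:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        # clock and sleep are injectable so the class can be tested without waiting.
        self._clock = clock
        self._sleep = sleep
        self._last_call = None

    def wait(self):
        """Block until at least min_interval seconds have passed since the last call."""
        now = self._clock()
        if self._last_call is not None:
            remaining = self.min_interval - (now - self._last_call)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        # Record when this request was released so the next call can measure from it.
        self._last_call = now
        return now
```

In a scraping loop, calling `throttle.wait()` immediately before each request keeps the request rate at or below one per `min_interval` seconds. The first call returns without sleeping.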

The Future of Web Scraping: Trends and Innovations

Looking ahead, the future of web scraping seems promising, with ongoing advancements in technology potentially changing how data is collected. Machine learning and artificial intelligence are increasingly being integrated into web scraping operations, allowing for smarter algorithms that can adapt to changing website structures. These innovations not only enhance the efficiency and accuracy of data extraction but also open up new possibilities for data analysis and insights.

Additionally, as web scraping continues to gain traction, we are likely to see more robust ethical frameworks and legislative guidelines emerge. Organizations may adopt stricter measures to protect their data, leading to advancements in web scraping technologies that prioritize compliance. By keeping an eye on these trends and innovations, data professionals can adapt their strategies to leverage web scraping effectively while remaining within the boundaries of ethical and legal practices.

Frequently Asked Questions

What is web scraping and how does it work?

Web scraping is the automated process of extracting data from websites. It typically involves sending a request to a web page, retrieving the HTML content, and then using Python libraries such as Beautiful Soup or Scrapy to parse that content and extract the desired information.

What are the common uses of web scraping?

Web scraping is widely used for various applications including data mining, price comparison, market research, and competitive analysis. By automating data collection, users can gather insights quickly and efficiently.

How do I get started with Python web scraping?

To start with Python web scraping, familiarize yourself with libraries such as Beautiful Soup and Scrapy. They provide powerful tools for parsing HTML and managing requests. Following a structured tutorial will help you understand how to scrape data effectively and ethically.

What is Beautiful Soup and how is it used in web scraping?

Beautiful Soup is a popular Python library used for web scraping. It simplifies the process of navigating and parsing HTML or XML documents, making it easier to extract specific data elements from web pages.

Can you recommend a Scrapy guide for beginners?

Yes! For beginners, the official Scrapy documentation is an excellent resource. It offers a comprehensive Scrapy guide that includes tutorials on building web crawlers, managing data extraction, and handling different web scraping challenges.

What ethical considerations should I keep in mind while web scraping?

Ethical web scraping involves respecting the terms of service of websites, avoiding excessive requests that can overload servers, and ensuring that the data collected is used responsibly and in compliance with legal standards.

What is data extraction and how is it related to web scraping?

Data extraction is the process of retrieving specific data from a source such as a file, a database, or a website. Web scraping is data extraction applied to web pages: information is pulled from the page's HTML for analysis or storage.

Is web scraping legal?

The legality of web scraping varies by jurisdiction and depends on the website’s terms of service. It’s essential to review these terms and adhere to ethical guidelines to ensure compliance.

How can I avoid getting blocked while doing web scraping?

To avoid getting blocked while web scraping, implement techniques such as rotating IP addresses, obeying the website’s robots.txt file, and limiting the frequency of requests to mimic human browsing behavior.

What challenges might I face when using web scraping tools?

Some common challenges in web scraping include dealing with dynamic content, CAPTCHA barriers, and anti-scraping measures that websites implement to protect their data. Additionally, parsing complex HTML structures can pose difficulties.

| Topic | Key Point |
|---|---|
| Web Scraping Definition | The automated process of collecting data from websites. |
| Applications | Common use cases include data mining, price comparison, and market research. |
| Tools | Python libraries such as Beautiful Soup and Scrapy are commonly used for web scraping. |
| Ethical Considerations | It is important to follow ethical guidelines and best practices when scraping websites. |

Summary

Web scraping is a valuable technique that allows users to extract data from websites efficiently. It serves various purposes, such as data mining and market research, highlighting its importance in today’s data-driven world. Python libraries like Beautiful Soup and Scrapy make scraping approachable, and following ethical guidelines, such as respecting robots.txt, terms of service, and server load, reduces the risk of legal issues. Understanding these aspects is vital for anyone looking to leverage web scraping for their projects.