Web scraping is a technique that lets individuals and businesses extract data from websites efficiently. With the right *data scraping tools*, users can gather large volumes of information to drive insights and support decision-making. The *importance of web scraping* in today’s data-driven environment is considerable: it gives researchers and analysts access to datasets for studies and market research. However, navigating web scraping challenges, including legal and ethical considerations, is vital to ensure responsible practice. As industries increasingly rely on data, learning languages commonly used for scraping, such as Python and JavaScript, can open doors to innovative applications and solutions.

Data harvesting, often referred to as web scraping, is transforming the way we interact with information online. The process extracts data from websites and converts it into usable formats, which is valuable across sectors from e-commerce to academia. Ethical data extraction practices matter because they support compliance with legal frameworks and protect user privacy. Understanding which programming languages suit these tasks makes it easier to extract useful insights. Practitioners also face challenges, including website restrictions and the need for robust data management.

Understanding Web Scraping: A Comprehensive Overview

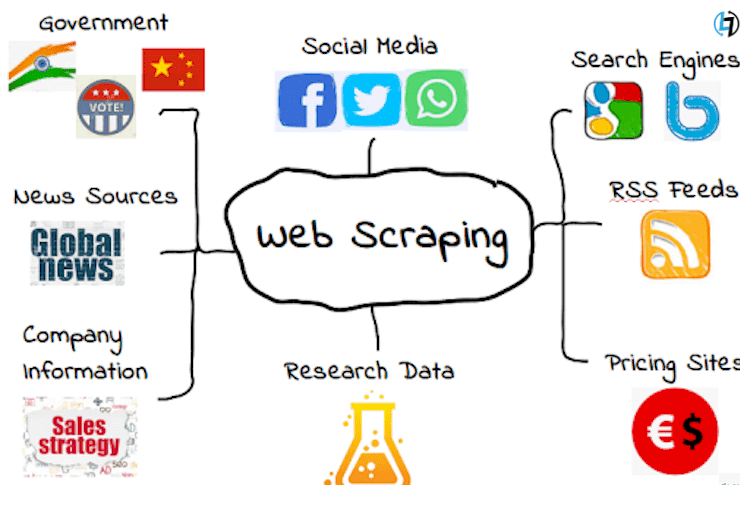

Web scraping, often termed data extraction, refers to the automated process of collecting information from websites. This technique plays a pivotal role in various fields, from market research to academic studies, where data-driven decision-making is crucial. By utilizing web scraping, businesses can gather vast amounts of data quickly and efficiently, enabling them to analyze trends, track competitors, or gain insights into consumer behavior.

As we dive deeper into understanding web scraping, it’s essential to recognize its relevance across different sectors. Industries from e-commerce to finance capitalize on this technology to aggregate data from multiple sources. The versatility of web scraping lies in its ability to adapt to various requirements, making it an indispensable tool for researchers and companies looking to enhance their data collection methods.

The Importance of Web Scraping for Businesses and Researchers

Web scraping is valuable to businesses and researchers alike. By automating the data collection process, it allows organizations to save time and resources that would otherwise be spent on manual data entry or searching for relevant information. This efficiency can lead to better pricing strategies, improved marketing campaigns, and more informed business decisions.

For researchers, web scraping opens up a wealth of possibilities. Access to real-time data helps in conducting thorough analyses and forming comprehensive conclusions based on current trends. Furthermore, ethical web scraping practices ensure that researchers can gather the data they need without infringing on the rights of data owners, maintaining integrity and respect within their research endeavors.

Key Web Scraping Tools and Technologies

When it comes to web scraping, several tools have gained popularity for their approachable APIs and powerful capabilities. Beautiful Soup parses HTML and makes it easy to pull out relevant elements, Scrapy is a full crawling framework, and Selenium automates a real browser so that pages can be scraped after their scripts have run. Each tool brings its own strengths, allowing users to tailor their scraping efforts to their specific needs.

Beyond these tools, the choice of programming language also plays a crucial role in web scraping projects. Python, with its extensive libraries and rich community support, has emerged as a favorite among developers due to its simplicity and efficiency. JavaScript, particularly on Node.js with a headless browser, allows for scraping dynamic content from websites that rely on heavy client-side frameworks.

Ethical Considerations in Web Scraping

While web scraping offers numerous advantages, it’s equally important to approach it ethically. Ethical web scraping involves adhering to the terms of service of the websites being scraped, ensuring that your data collection practices do not violate any legal restrictions. This respect for data ownership fosters a better relationship between data scrapers and website owners, ensuring that the web scraping community maintains a good standing.

Moreover, ethical considerations extend beyond just adhering to legalities. Scrapers must be mindful of how they handle and store data. Implementing secure methods for managing sensitive information and being transparent about data usage promotes trust and accountability in data-related tasks.

Overcoming Web Scraping Challenges

Despite its advantages, web scraping comes with its own set of challenges that practitioners must navigate. One common obstacle involves dealing with CAPTCHA systems that prevent automated bots from accessing data. Developers must employ tactics such as CAPTCHA-solving services or human verification to circumvent these barriers, adding complexity to the scraping process.

Another challenge lies in the constantly changing structure of websites. As websites update their designs and layouts, scrapers must evolve accordingly to successfully extract data. This requires continuous monitoring and updating of the scraping scripts to ensure they can adapt to any changes without losing functionality.
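
One way to notice a layout change early is to validate what the scraper extracts before storing it. The following is a minimal sketch rather than a standard technique from any particular library: the field names, the `validate_records` helper, and the 90% threshold are all assumptions chosen for illustration.

```python
# Hypothetical field names; a real scraper would list its own.
REQUIRED_FIELDS = ("title", "price")


def validate_records(records, required=REQUIRED_FIELDS, min_fill_rate=0.9):
    """Raise ValueError when extracted data looks broken.

    An empty result, or a required field that is missing from most
    records, often means the site's markup changed and the selectors
    in the scraper no longer match.
    """
    if not records:
        raise ValueError("no records extracted; the page layout may have changed")
    for field in required:
        filled = sum(1 for record in records if record.get(field))
        rate = filled / len(records)
        if rate < min_fill_rate:
            raise ValueError(
                f"field {field!r} is filled in only {rate:.0%} of records"
            )
    return records


# Illustrative data: the second batch mimics a selector that stopped matching.
good_batch = [{"title": "Blue Widget", "price": "9.99"},
              {"title": "Red Widget", "price": "4.50"}]
broken_batch = [{"title": "Blue Widget", "price": None},
                {"title": "Red Widget", "price": None}]

validate_records(good_batch)  # passes silently
try:
    validate_records(broken_batch)
except ValueError as error:
    print("Scraper needs attention:", error)
```

Running a check like this after every scrape turns a silent failure into an explicit error that can trigger an alert or a script update.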

Popular Programming Languages for Web Scraping

When it comes to implementing web scraping projects, programming languages like Python and JavaScript are at the forefront due to their flexibility and rich libraries. Python stands out for its scalability and ease of use, making it ideal for both beginners and seasoned developers. With libraries like Requests and Beautiful Soup, Python offers a robust environment for quickly developing effective scrapers.
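
To make the parsing step concrete, here is a small sketch of link extraction. It deliberately uses Python's built-in `html.parser` module instead of Requests and Beautiful Soup so that it runs without installing anything, and it parses an inline sample string rather than fetching a live page. The `LinkExtractor` class, `extract_links` function, and `SAMPLE_HTML` are illustrative names, not part of any library.

```python
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collects (href, text) pairs for every <a> tag that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the <a> tag currently open, if any
        self._text = []     # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def extract_links(html):
    """Return all (href, link text) pairs found in an HTML string."""
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return parser.links


# Inline sample standing in for a downloaded page.
SAMPLE_HTML = """
<ul>
  <li><a href="/products/1">Blue Widget</a></li>
  <li><a href="/products/2">Red <b>Widget</b></a></li>
</ul>
"""

print(extract_links(SAMPLE_HTML))
```

With Beautiful Soup the same task is usually shorter, but the flow is the same: obtain the HTML, walk its structure, and keep only the elements of interest.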

On the other hand, JavaScript proves invaluable for scraping web applications that rely on dynamic content. Libraries like Puppeteer enable developers to interact with websites just as a user would, allowing for the extraction of data rendered through client-side scripting. This capability is essential for scraping comprehensive data from modern web applications.

Staying Updated in the Web Scraping Landscape

The field of web scraping is not static; it continuously evolves with technological advancements and shifts in data privacy regulations. Therefore, it’s crucial for web scraping professionals to stay informed about the latest developments, tools, and best practices. Engaging with online communities, attending webinars, and reading industry blogs can provide valuable insights into emerging trends and technologies.

Moreover, by staying updated, scrapers can better navigate challenges associated with web scraping. Understanding new legal frameworks and technological innovations helps ensure that scraping activities remain compliant and effective. Emphasizing continuous learning and adaptation serves not only to enhance individual skills but also to ensure the long-term sustainability of web scraping projects.

Best Practices for Effective Web Scraping

Implementing best practices in web scraping is essential for maximizing efficiency and minimizing risks. One primary approach is to establish a well-structured and documented scraping process. This involves defining clear objectives, selecting appropriate tools, and ensuring that all steps are properly recorded. By doing so, developers can streamline their workflows and quickly troubleshoot any issues that arise during scraping.

Additionally, respecting the robots.txt file of target websites is a fundamental aspect of ethical web scraping. This file tells automated clients which paths the site owner does or does not want them to access. By adhering to these guidelines, scrapers can reduce the risk of legal conflicts and maintain a positive rapport with website owners. Alongside this, limiting scraping frequency prevents overloading servers, which might otherwise lead to IP bans.
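
A robots.txt check can be sketched with Python's standard `urllib.robotparser` module. The rules below are an invented sample parsed from a string, and `example-research-bot` is a made-up user agent. A real scraper would more likely point the parser at the site's own file with `set_url()` and `read()`.

```python
from urllib.robotparser import RobotFileParser

# Invented robots.txt content, used here so the example needs no network access.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-research-bot"  # hypothetical user agent name


def build_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


rules = build_rules(ROBOTS_TXT)

# Check each URL before requesting it.
for url in ("https://example.com/products/1",
            "https://example.com/private/report"):
    allowed = rules.can_fetch(USER_AGENT, url)
    print(url, "->", "allowed" if allowed else "disallowed")

# Some sites also publish a suggested delay between requests, in seconds.
print("Suggested delay:", rules.crawl_delay(USER_AGENT))
```

Checking `can_fetch` before every request keeps the decision in one place, so the scraper never has to hard-code which paths are off limits.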

The Future of Web Scraping: Trends to Watch

As the digital landscape continues to evolve, so does the practice of web scraping. Looking ahead, one significant trend is the incorporation of Artificial Intelligence (AI) and Machine Learning (ML) techniques into data scraping. These technologies promise to enhance the accuracy and efficiency of web scrapers by enabling them to learn and adapt to new patterns in data presentation.

Moreover, increased emphasis on data privacy and protection laws will shape the future of web scraping. With regulations like GDPR and CCPA now in force, web scrapers must prioritize compliance and ethical practices. Understanding these legal frameworks will not only safeguard developers from potential legal issues but also foster ethical data handling in the industry.

Frequently Asked Questions

What is web scraping and why is it important?

Web scraping is the automated process of extracting data from websites. It is important because it enables businesses and researchers to gather large volumes of information quickly and efficiently, facilitating decision-making and data analysis. By leveraging web scraping, organizations can analyze market trends, monitor competitors, and acquire necessary data without manual collection.

What are some common programming languages used for web scraping?

Some popular programming languages for web scraping include Python, JavaScript, and Ruby. Python is particularly favored due to its powerful libraries like Beautiful Soup and Scrapy, which simplify data extraction and parsing from HTML documents. JavaScript, with the help of libraries such as Puppeteer, allows for scraping dynamic content rendered by browsers.

What are the ethical considerations of web scraping?

Ethical web scraping involves respecting the terms of service of websites, ensuring that scraping does not overload servers, and maintaining user privacy. It is crucial to handle and store the scraped data responsibly, as well as to be aware of the legal implications that may arise from unauthorized data collection.

What are some challenges faced in web scraping?

Web scraping can present several challenges, such as dealing with CAPTCHAs, which can block bots from accessing data, handling pagination to ensure all relevant pages are scraped, and navigating through dynamically loaded content that can complicate data extraction. Additionally, anti-scraping technologies employed by websites can hinder the scraping process.
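
Pagination, for instance, can often be handled with a loop that follows each page's "next" link until none remains. The sketch below assumes a hypothetical `fetch_page` callable that returns a dictionary with `items` and `next` keys. Real sites expose the next page in different ways, so that shape is an assumption, and `FAKE_SITE` simply stands in for a live website.

```python
def scrape_all_pages(fetch_page, start_url, max_pages=100):
    """Follow 'next' links from start_url and collect every item.

    Stops when there is no next page, when a URL repeats (to avoid
    endless loops), or when max_pages pages have been visited.
    """
    items = []
    seen = set()
    url = start_url
    while url is not None and url not in seen and len(seen) < max_pages:
        seen.add(url)
        page = fetch_page(url)
        items.extend(page["items"])
        url = page.get("next")
    return items


# In-memory stand-in for a paginated listing.
FAKE_SITE = {
    "/list?page=1": {"items": ["a", "b"], "next": "/list?page=2"},
    "/list?page=2": {"items": ["c"], "next": "/list?page=3"},
    "/list?page=3": {"items": ["d"], "next": None},
}

print(scrape_all_pages(FAKE_SITE.__getitem__, "/list?page=1"))
```

The `seen` set and the `max_pages` cap are safety measures: they keep the scraper from looping forever if a site links its last page back to the first.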

What tools can be used for web scraping?

There are various data scraping tools available, including Scrapy, Beautiful Soup, Octoparse, and Import.io. Scrapy and Beautiful Soup are Python libraries aimed at developers, while Octoparse and Import.io provide point-and-click interfaces that require little or no code. Together they enable both novice and experienced users to extract and manipulate web data efficiently.

How can I start ethical web scraping projects?

To start ethical web scraping projects, first ensure you understand and comply with the target website’s terms of service. Use reputable scraping tools and libraries, implement rate limiting to avoid overwhelming the server, and prioritize transparency regarding the data you collect. Lastly, stay informed about evolving ethical guidelines and legal standards in web scraping.
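
Rate limiting can be as simple as enforcing a minimum pause between requests. Here is one possible sketch. The `Throttle` class is an illustrative helper rather than part of any scraping library, and the 0.1 second interval is only there to keep the demonstration quick. A polite interval for a real site is usually much longer.

```python
import time


class Throttle:
    """Enforces a minimum delay between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval  # seconds between requests
        self._clock = clock               # injectable for testing
        self._sleep = sleep               # injectable for testing
        self._last = None                 # time of the previous request

    def wait(self):
        """Pause until min_interval seconds have passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


throttle = Throttle(min_interval=0.1)
for url in ("https://example.com/a", "https://example.com/b"):
    throttle.wait()
    # A real scraper would request the page here.
    print("ready to request", url)
```

Calling `throttle.wait()` immediately before each request keeps the pacing logic separate from the fetching code, so the interval can be tuned without touching the rest of the scraper.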

Why is staying updated on web scraping techniques important?

Staying updated on web scraping techniques is essential due to the continuously changing nature of the web. Websites frequently alter their structures and deploy new anti-scraping measures. By keeping abreast of advancements in scraping tools and programming languages, you can improve your web scraping efficiency and adapt to new challenges promptly.

| Key Point | Details |
|---|---|
| Definition of Web Scraping | Web scraping is the process of automating the extraction of data from websites. |
| Importance | It is crucial for businesses and researchers as it facilitates efficient data collection. |
| Popular Languages | Python and JavaScript are among the top programming languages used for creating web scrapers. |
| Legal Considerations | Respect website terms of service, and ensure proper data handling and storage. |
| Technical Challenges | Includes parsing HTML, handling pagination, and overcoming CAPTCHA challenges. |
| Continuous Learning | Staying updated on tools and techniques is essential for effective web scraping. |

Summary

Web scraping is an invaluable technique for data collection that helps businesses and researchers gather insights efficiently. As discussed, it involves automating data extraction from websites, which can provide significant advantages if done ethically and legally. By incorporating popular programming languages such as Python or JavaScript, scrapers can overcome various challenges like pagination and CAPTCHAs. It’s crucial to remain informed about the constantly evolving landscape of web scraping to enhance effectiveness and ensure compliance with legal standards. By understanding these key aspects, individuals and organizations can responsibly harness the power of web scraping.