Web scraping has become a vital technique for gathering large amounts of information from websites quickly and efficiently. With it, individuals and businesses can turn unstructured web data into structured insights and make informed decisions based on market trends or consumer behavior. However, it’s critical to understand the legality of scraping and to comply with website policies and ethical guidelines. Following best practices protects your data extraction efforts and promotes a responsible approach to data collection. With many tools available to streamline the process, mastering web scraping techniques can significantly enhance your data analysis capabilities.

Data extraction from web sources, commonly referred to as web harvesting, is a growing field that empowers users to collect information from the vast expanse of the Internet. Different methodologies, including automated collection processes, enable efficient retrieval of data while maintaining compliance with ethical standards and legal requirements. Whether you’re interested in market research or content aggregation, understanding the nuances of data scraping will aid in effectively utilizing online resources. Tools designed for web extraction, from programming libraries to user-friendly online platforms, cater to various skill levels and project needs. Overall, mastering these data collection strategies opens a gateway to harnessing the power of web-based information.

Understanding Web Scraping Techniques

Web scraping techniques vary depending on the target website and the type of data being extracted. Basic methods include parsing HTML content, using APIs, and leveraging browser automation tools. HTML scraping requires knowledge of the Document Object Model (DOM) and of how to traverse its structure to retrieve relevant information. APIs, on the other hand, provide a more straightforward way to obtain structured data, often in a format like JSON. Browser automation comes into play when dealing with dynamic content generated by JavaScript, which typically requires tools like Puppeteer to render the page and simulate user behavior.
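As a minimal sketch of HTML parsing, the example below walks the tags of a small page and collects each link's `href` and text. It uses Python's standard-library `html.parser` rather than a third-party library so that it runs without installing anything. The class name `LinkCollector`, the helper `extract_links`, and the sample markup are illustrative assumptions, not part of any particular site or tool.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects (href, link text) pairs from <a> tags that have an href."""

    def __init__(self):
        super().__init__()
        self.links = []      # finished (href, text) pairs
        self._href = None    # href of the <a> currently open, if any
        self._text = []      # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only keep text that appears while a link is open.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def extract_links(html):
    """Parse an HTML string and return its links as (href, text) tuples."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical markup standing in for a downloaded page.
SAMPLE_HTML = """
<ul>
  <li><a href="/products/1">Blue <b>Widget</b></a></li>
  <li><a href="/products/2">Red Widget</a></li>
</ul>
"""

print(extract_links(SAMPLE_HTML))
# [('/products/1', 'Blue Widget'), ('/products/2', 'Red Widget')]
```

In a real project, a library such as Beautiful Soup would likely replace the hand-written parser class, but the idea of traversing the document structure to pick out specific elements is the same.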

Moreover, advanced techniques such as headless browsing can enhance the scraping process, allowing you to run scripts without a graphical interface. Tools like Selenium automate browsers, making it easier to navigate complex site architectures. Understanding these web scraping techniques is crucial for developers looking to optimize their data extraction efforts and yield more accurate analysis results.

The Legal Aspects of Web Scraping

The legality of web scraping is a hotly debated topic among developers and legal experts alike. Scraping publicly available data is not inherently illegal in many jurisdictions, but there are significant nuances to consider. Many websites have terms of service that explicitly prohibit automated data extraction. Additionally, cases such as hiQ Labs v. LinkedIn have highlighted the complexities around accessing publicly visible user-generated data and the implications of violating such terms. Therefore, it is vital for web scrapers to review the applicable laws and ensure they do not infringe on copyright or privacy rights.

Moreover, the robots.txt file serves as a guideline for what portions of a site can be crawled or scraped. Ignoring these directives not only risks bans from the website but can also lead to potential legal action. It is always advisable to operate within the legal framework by respecting these specifications and employing ethical data usage practices, particularly when handling sensitive information or user data.
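Checking `robots.txt` can be automated. The sketch below uses Python's standard-library `urllib.robotparser` to test whether a given user agent may fetch a URL. The rules, the `example-bot` user agent, and the `example.com` URLs are made up for illustration; a real scraper would load the file from the target site instead of a string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; normally this is fetched from the site.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt):
    """Create a parser from the text of a robots.txt file."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser, user_agent, url):
    """Return True if the rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


rules = build_parser(SAMPLE_ROBOTS_TXT)
print(is_allowed(rules, "example-bot", "https://example.com/products"))        # True
print(is_allowed(rules, "example-bot", "https://example.com/private/report"))  # False
```

To read the live file instead, `RobotFileParser` also provides `set_url()` and `read()`. A passing check only reflects the site's crawling preferences; it does not replace reviewing the terms of service.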

Best Practices for Ethical Web Scraping

When embarking on a web scraping project, adhering to best practices is essential for ethical and effective data extraction. Chief among these is paying close attention to the site’s robots.txt file. This file outlines the permissions for crawling different parts of a website and should be reviewed prior to initiating any scraping activities. Ignoring these guidelines not only jeopardizes your access to the site but could also lead to legal consequences.

In addition to legal considerations, it’s critical to implement rate limiting in your scraping operations. Sending too many requests in a short period can overwhelm the server, leading to IP bans or degraded performance for users. Utilizing sleep intervals between requests and ensuring a respectful scraping frequency can help maintain both site integrity and your credibility as a developer.
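One simple way to add sleep intervals is a small helper that enforces a minimum delay between consecutive requests. The sketch below is an assumption about how this might be structured: `RateLimiter`, `fetch_all`, and the `fetch` callable are hypothetical names, and the stand-in `fetch` does no network access. The clock and sleep functions are injectable so the behavior can be tested without real waiting.

```python
import time


class RateLimiter:
    """Enforces a minimum delay between consecutive calls to wait()."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval  # seconds between requests
        self._clock = clock
        self._sleep = sleep
        self._last = None                 # time of the previous request

    def wait(self):
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


def fetch_all(urls, fetch, limiter):
    """Call fetch(url) for each URL, pausing between calls via the limiter."""
    results = []
    for url in urls:
        limiter.wait()
        results.append(fetch(url))
    return results


# Stand-in for a real HTTP request; it only echoes the URL.
def fake_fetch(url):
    return f"fetched {url}"


pages = fetch_all(
    ["https://example.com/a", "https://example.com/b"],
    fake_fetch,
    RateLimiter(min_interval=0.05),  # a real scraper would use a longer delay
)
print(pages)
```

An appropriate interval depends on the site. If `robots.txt` specifies a crawl delay, or the site publishes usage guidelines, those are a reasonable starting point.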

Essential Tools for Effective Web Scraping

The choice of tools can significantly influence the success of web scraping endeavors. Python remains a dominant language for scraping due primarily to its robust libraries such as Beautiful Soup, Scrapy, and Requests. Each of these libraries facilitates different aspects of data extraction; for instance, Beautiful Soup excels at parsing HTML, while Scrapy is designed for large-scale web crawling sessions.

For those dealing with dynamic websites, tools like Puppeteer are invaluable, enabling users to scrape content that requires interaction with JavaScript. Additionally, user-friendly online tools like Octoparse and ParseHub are popular choices among non-technical users due to their intuitive interfaces. These tools abstract much of the underlying complexity, allowing users to focus on the data extraction process instead of coding intricacies.

Data Extraction Considerations

Data extraction is the essence of web scraping, and understanding the type of data you wish to collect is fundamental to the process. Effective data extraction starts with identifying your goals and determining what constitutes relevant information. For instance, are you looking for structured data like tables or unstructured data such as text and images? This will dictate the approach and tools you employ.

Furthermore, it’s vital to clean and organize the extracted data post-collection. Raw data often requires trimming, formatting, and structuring to be useful. Effective data cleaning techniques, such as removing duplicates and standardizing formats, can transform your raw data into a reliable resource for analysis or decision-making.
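The cleaning steps mentioned above (trimming, standardizing formats, removing duplicates) can be sketched in a few lines. The record layout with `name` and `price` fields is a hypothetical example, and the price handling assumes US-style formatting with `$` and thousands separators.

```python
def clean_records(records):
    """Trim whitespace, standardize formats, and drop duplicate records."""
    seen = set()
    cleaned = []
    for record in records:
        # Collapse runs of whitespace and trim the ends.
        name = " ".join(record["name"].split())
        # Standardize prices such as "$1,299.00" to a float.
        price = float(record["price"].replace("$", "").replace(",", "").strip())
        # Treat records as duplicates when name (case-insensitive) and price match.
        key = (name.lower(), price)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({"name": name, "price": price})
    return cleaned


# Hypothetical raw scraper output.
RAW = [
    {"name": "  Blue   Widget ", "price": "$1,299.00"},
    {"name": "blue widget", "price": "1299"},
    {"name": "Red Widget", "price": " $15.50 "},
]

print(clean_records(RAW))
# [{'name': 'Blue Widget', 'price': 1299.0}, {'name': 'Red Widget', 'price': 15.5}]
```

The first occurrence of each record is kept, so the order of the input determines which spelling of a duplicated name survives.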

Tips for Successful Web Scraping Projects

Launching a successful web scraping project involves meticulous planning and foresight. Setting clear objectives at the outset can streamline your data collection process, ensuring that you remain focused on the end goal. Consider drafting a clear project outline that includes your target website, the data you aim to extract, and specific metrics for measuring your success.

Moreover, testing your scraping scripts is essential for success. This includes running trials to identify any issues with data retrieval or formatting. By proactively addressing potential problems before full-scale implementation, you can mitigate risks and enhance the efficiency of your scraping operations.

Navigating Challenges in Web Scraping

Despite its many advantages, web scraping comes with a host of challenges that can hinder successful execution. Websites may implement anti-scraping technologies, such as CAPTCHAs and IP blocking, to shield their data from unauthorized access. As a result, it’s crucial to develop strategies that allow you to navigate these obstacles effectively, ensuring the continuity of your data collection efforts.

Furthermore, website structures can change frequently, which may disrupt established scraping scripts. Regular monitoring and updating of your web scraping strategies are necessary to maintain efficiency and efficacy. Adaptability in the face of changing web standards is key to sustained success in web scraping.
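One lightweight way to notice that a site's layout has changed is to validate scraper output and flag records with missing fields. The sketch below is illustrative only: `find_missing_fields` and the `title` and `price` field names are assumptions, not part of any specific tool.

```python
def find_missing_fields(records, required_fields):
    """Return {record index: [missing field names]} for incomplete records."""
    problems = {}
    for index, record in enumerate(records):
        missing = [
            field for field in required_fields
            if record.get(field) in (None, "")
        ]
        if missing:
            problems[index] = missing
    return problems


# Hypothetical scraper output: the second record lost its price, as can
# happen when a site renames or moves the element a selector relied on.
SCRAPED = [
    {"title": "Blue Widget", "price": "19.99"},
    {"title": "Red Widget", "price": ""},
]

print(find_missing_fields(SCRAPED, ["title", "price"]))
# {1: ['price']}
```

Running a check like this after each scrape, and alerting when many records fail, gives an early signal that selectors need updating.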

Real-World Applications of Web Scraping

Web scraping finds utility across various industries, serving as a potent tool for data-driven decision-making. In the e-commerce sector, businesses scrape competitor pricing to remain competitive and adjust their offerings accordingly. Additionally, data aggregation sites utilize scraping to compile information on a range of topics, from airline tickets to hotel availability.

Beyond commercial applications, web scraping is also employed in academic research to gather data sets for analysis. Researchers often scrape websites to acquire large volumes of data for studies, facilitating insights across numerous disciplines. As the breadth of applications continues to expand, the versatility and importance of web scraping in the digital age cannot be overstated.

The Future of Web Scraping

As technology evolves, so too does the landscape of web scraping. The increasing prevalence of APIs, coupled with advancements in AI and machine learning technology, is likely to shape the future of data extraction practices. Automated systems that self-learn may emerge, greatly enhancing the efficiency and accuracy of web scraping tasks.

However, as the field grows, so does the scrutiny of its ethical application. Companies and data practitioners must remain vigilant about the impact of web scraping on privacy and about data protection regulations. Ensuring compliance with emerging laws and best practices will be critical as web scraping continues to develop as a significant method for data extraction.

Frequently Asked Questions

What is web scraping?

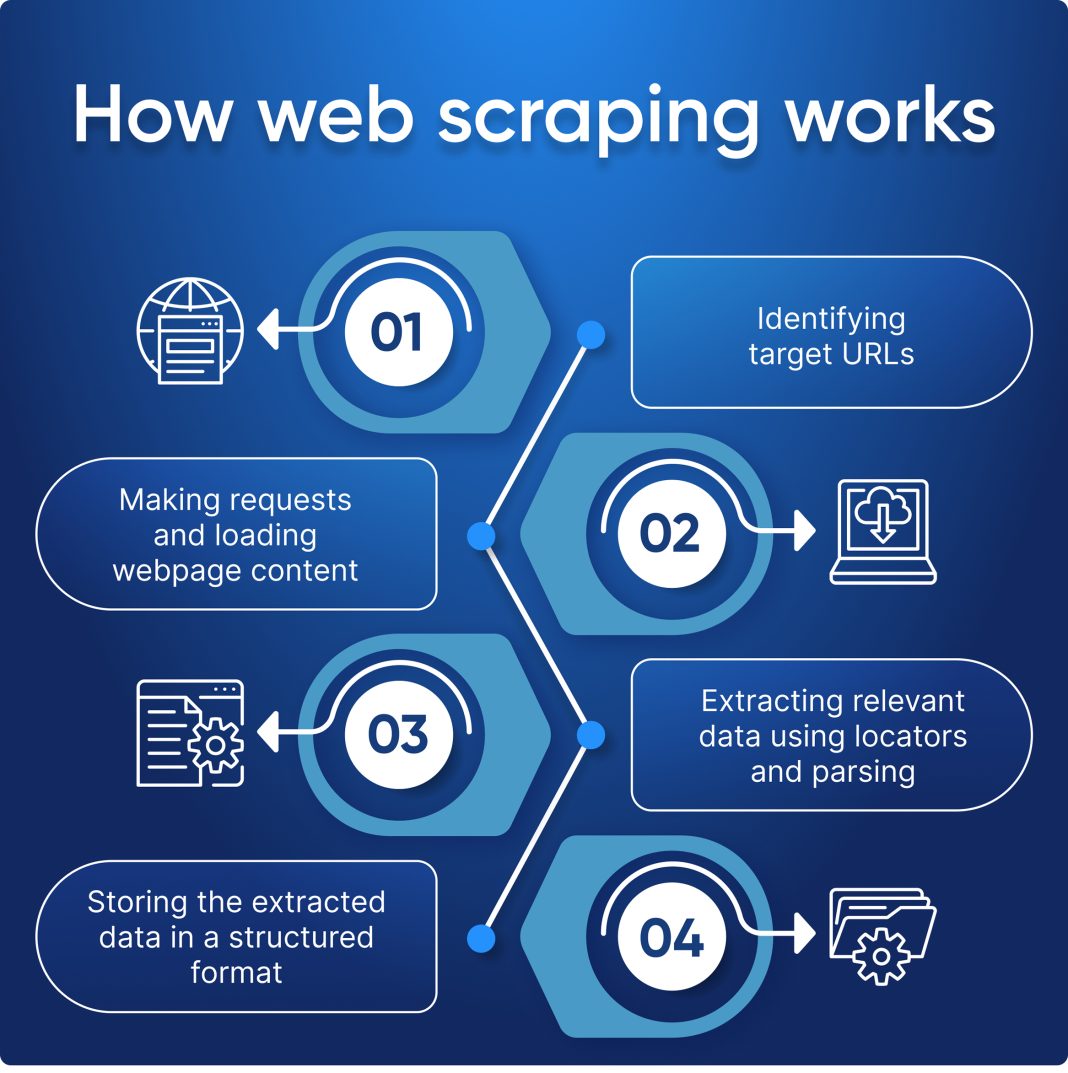

Web scraping is the automated process of collecting information from websites. It enables users to extract data from web pages and convert it into a structured format for further analysis or storage.

Is web scraping legal?

The legality of web scraping depends on various factors, including a website’s `robots.txt` file and its terms of service. Always check these guidelines before scraping to ensure compliance and avoid potential legal issues.

What are the best practices for web scraping?

Best practices for web scraping include respecting the directives in the `robots.txt` file, implementing rate limiting to avoid server overload, and using scraped data ethically, particularly for commercial purposes.

What tools are available for web scraping?

There are several effective tools for web scraping, including Python libraries like Beautiful Soup and Scrapy, JavaScript tools like Puppeteer, and online applications such as Octoparse and ParseHub that offer user-friendly interfaces for data extraction.

What web scraping techniques should I know?

Key web scraping techniques include HTML parsing, DOM navigation, and utilizing APIs where available. Understanding these methods will enhance your ability to extract and process data efficiently.

How do I choose the right tool for web scraping?

Choosing the right tool for web scraping depends on your technical skills and the complexity of the data you wish to extract. For beginners, user-friendly tools like Octoparse are ideal, while more experienced users might prefer Python libraries like Scrapy or Beautiful Soup.

Can web scraping be used for data extraction from APIs?

Yes, while web scraping typically involves extracting data directly from HTML pages, it can also include accessing APIs to gather structured information efficiently. When available, APIs are often much cleaner and more reliable sources for data extraction.

What common challenges are faced in web scraping?

Common challenges in web scraping include dealing with dynamic content, CAPTCHAs, rate limiting from servers, and ensuring that your scraping techniques comply with the legal restrictions outlined in a site’s terms of service.

| Key Point | Details |
|---|---|
| What is Web Scraping? | Automated process of collecting information from websites. It transforms data from web pages into a structured format for analysis or storage. |
| Legality of Web Scraping | Check the website’s `robots.txt` file to see what can be scraped. Comply with the website’s terms of service. |
| Best Practices for Web Scraping | 1. Respect `robots.txt`; 2. Implement rate limiting to avoid server overload; 3. Use scraped data ethically. |
| Tools for Web Scraping | Popular tools include: Python (Beautiful Soup, Scrapy), JavaScript (Puppeteer, Cheerio), Online tools (Octoparse, ParseHub). |

Summary

Web scraping is a powerful technique for automating data collection from the web. Understanding its legal implications, following best practices, and employing the right tools are crucial for successful implementation. Whether using Python libraries or user-friendly online tools, web scraping can unlock vast opportunities for data analysis and research.